Executive summary

Embrace surveyed web and mobile teams to find out where they really stand on observability and AI. This report, based on responses from engineering professionals across 16 countries, provides a snapshot of the current state of observability maturity, tooling, and AI adoption. Key findings include:

- Most teams (74%) are stuck in the observability middle. They rate themselves at maturity levels 2 or 3 (on a scale of 1-5), meaning they have partial instrumentation but lack full end-to-end visibility. In fact, only 5% have fully correlated frontend-to-backend observability. The biggest capability gap is tying frontend issues to backend root causes.

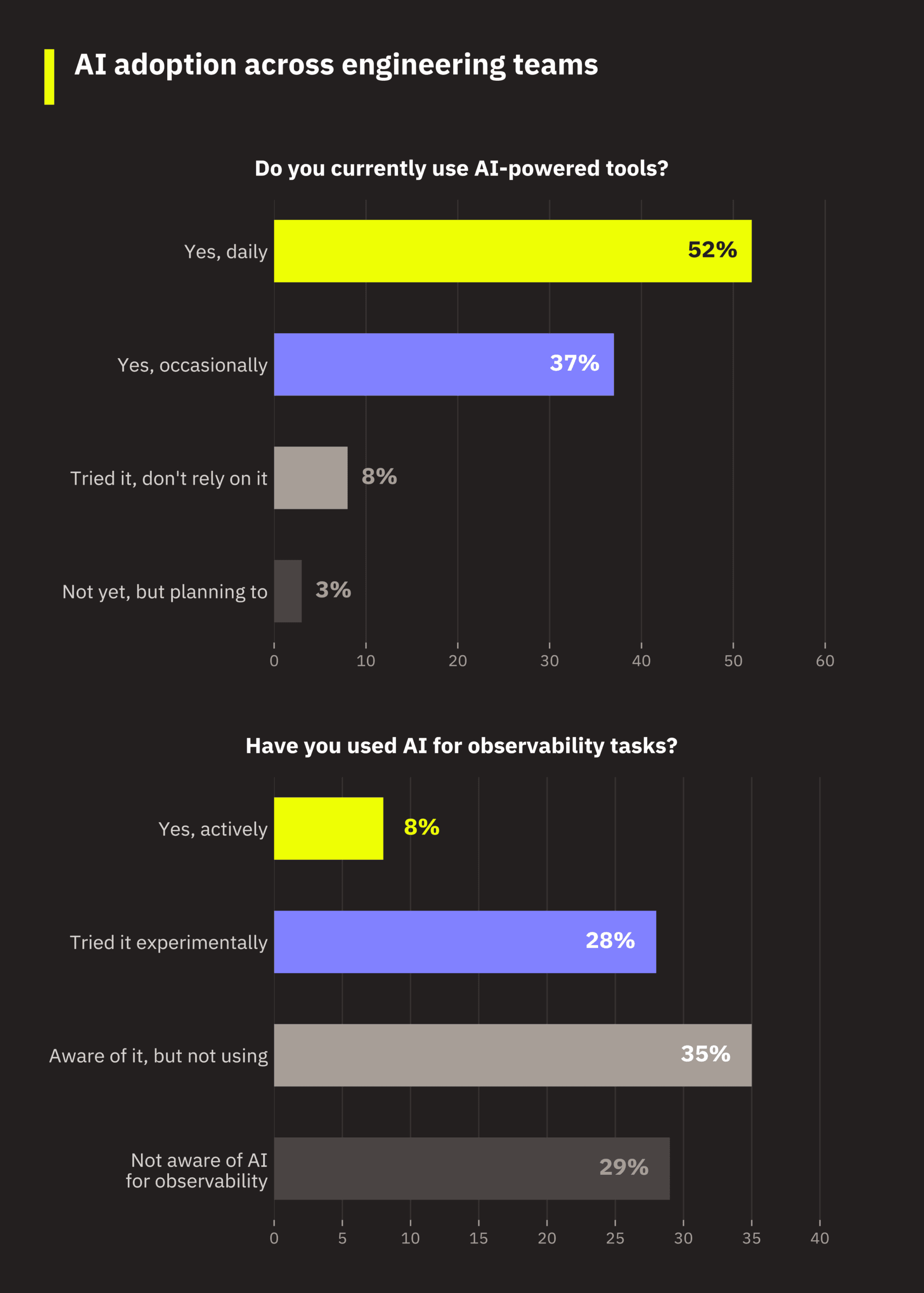

- AI adoption is high for development, but not for observability. 89% of respondents actively use AI tools in their workflow (52% daily), but only 8% use AI for observability tasks. 29% aren’t even aware AI can be applied to observability. The barrier isn’t sentiment. Engineers are broadly positive about AI, but adoption for observability hasn’t kept pace with adoption for development.

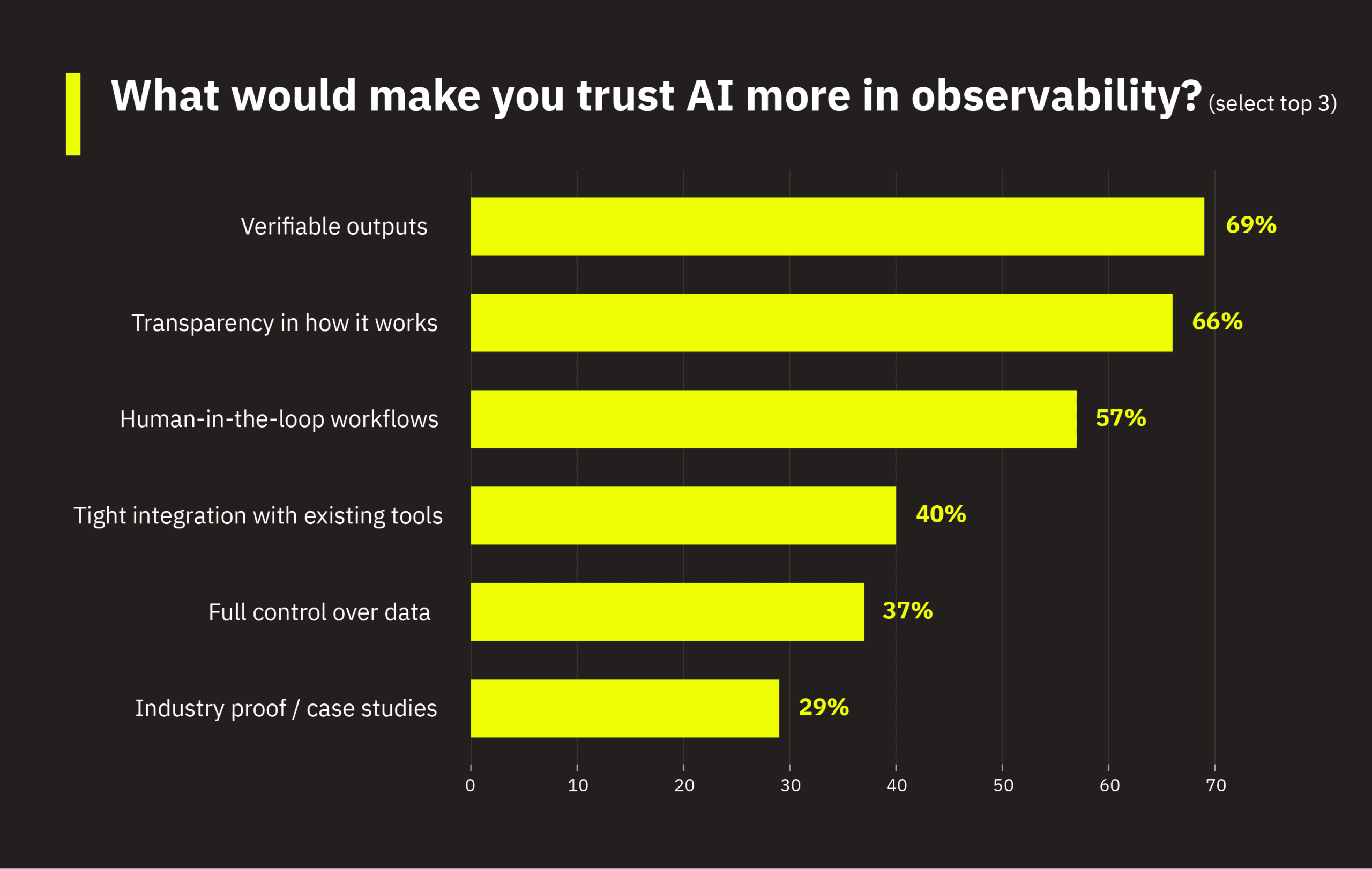

- Teams believe AI is the future of observability, but demand trust first. 72% say AI will be very important or mission-critical to observability in 2–3 years. But verifiable outputs (69%), transparency (66%), and human-in-the-loop workflows (57%) are non-negotiable preconditions for increased adoption.

- Mobile and web teams operate in largely separate worlds. They differ in several key categories, including tooling, constraints, and AI readiness. Mobile teams lag behind on observability setup and AI awareness but show strong demand for root cause analysis. Web teams are more instrumented and further along in AI experimentation.

- Managers and ICs diagnose observability challenges differently. Engineering managers cite resource and budget constraints, but client-side engineers cite tooling and automation gaps. Leadership is also more aware than practitioners that AI can be applied to observability. Both roles describe the same challenges that affect observability maturity, just from different vantage points.

1. Survey population and methodology

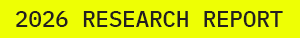

An online survey of 300 verified respondents was fielded in January-February 2026. Respondents include mobile engineers, frontend web engineers, full-stack engineers, engineering managers, directors, and product managers across e-commerce, media, SaaS, gaming, fintech, and other industries. They span 16 countries, with the largest concentrations in the United States (28%), United Kingdom (20%), and across continental Europe (60% combined).

Roles are well-distributed: Engineering managers/directors and full-stack engineers each comprise 22%, followed by mobile engineers and frontend engineers (web) at 17% each, and product managers at 12%. Organization sizes skew mid-to-large, with 58% in engineering orgs of 51 to 1,000 people. The industry mix is led by e-commerce/retail (31%) and media/streaming (22%), which are both sectors where frontend performance directly impacts revenue. In fact, 93% of respondents say frontend performance is at least somewhat critical to their business outcomes.

In terms of platform segmentation, respondents were classified based on the application types they work on: 46% work exclusively on web applications, 29% work exclusively on mobile, and 22% work across both platforms. The cross-platform group skews heavily toward managers and PMs, reflecting their broader organizational scope.

2. Observability maturity

2.1 The majority of teams are stuck in the middle

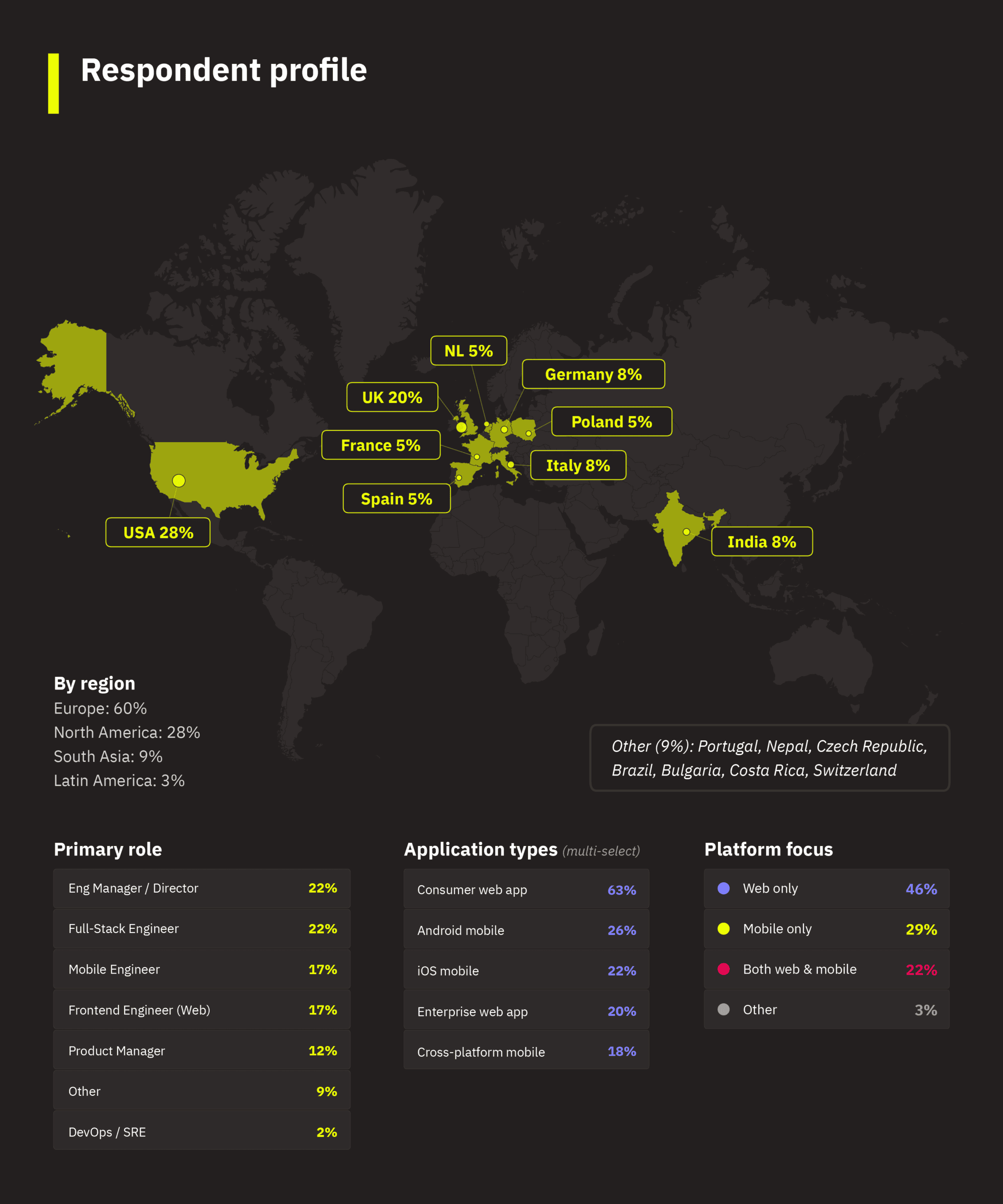

Respondents were asked to rate their observability maturity on a scale of 1-5, with the following guidelines:

- 1 – Reactive: We mostly rely on user complaints and crash reports.

- 2 – Mostly reactive, somewhat proactive

- 3 – Proactive: We detect issues early, and we confidently scale apps and infrastructure without disruptions.

- 4 – Mostly proactive, somewhat strategic

- 5 – Strategic: We correlate frontend and backend data and tie performance to organizational KPIs.

Want to know where your team’s observability stands? If you’re like most respondents, you’re somewhere in the middle. Almost three-quarters of respondents are in levels 2 or 3, with 48% describing their current observability as “some performance tracing and dashboards.” Only 8% view themselves as truly strategic, where they can connect performance directly to business outcomes. This is supported in the observability setup data, with only 5% of respondents having fully correlated frontend-to-backend observability capabilities.

2.2 The weakest link: Tying frontend issues to backend causes

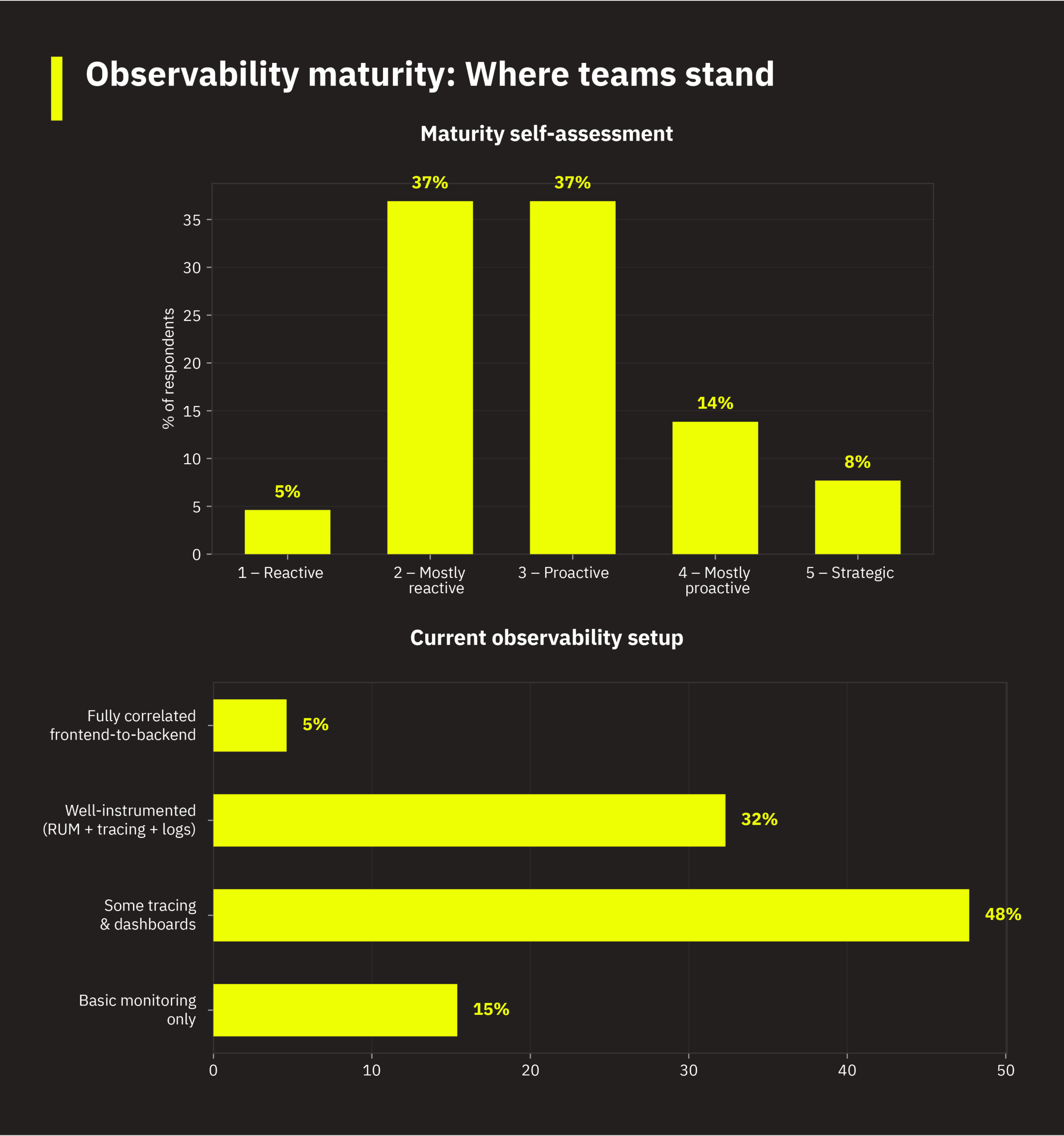

How confident is your team across core observability capabilities? For most respondents, the answer is “confident”, but rarely “very confident.” And there are some meaningful blind spots.

The weakest link is tying frontend issues to backend causes. This dimension has the highest “neutral” concentration (37%) and the lowest “very confident” rate (8%). These teams frequently struggle with an observability explanation gap. They can generally detect that something is wrong, but they just can’t explain why. You’ll see this gap running through this dataset. For example, the most-demanded AI use cases are pattern detection, regression detection, and root cause analysis, which all target this same gap.

2.3 What teams use to monitor mobile and web performance

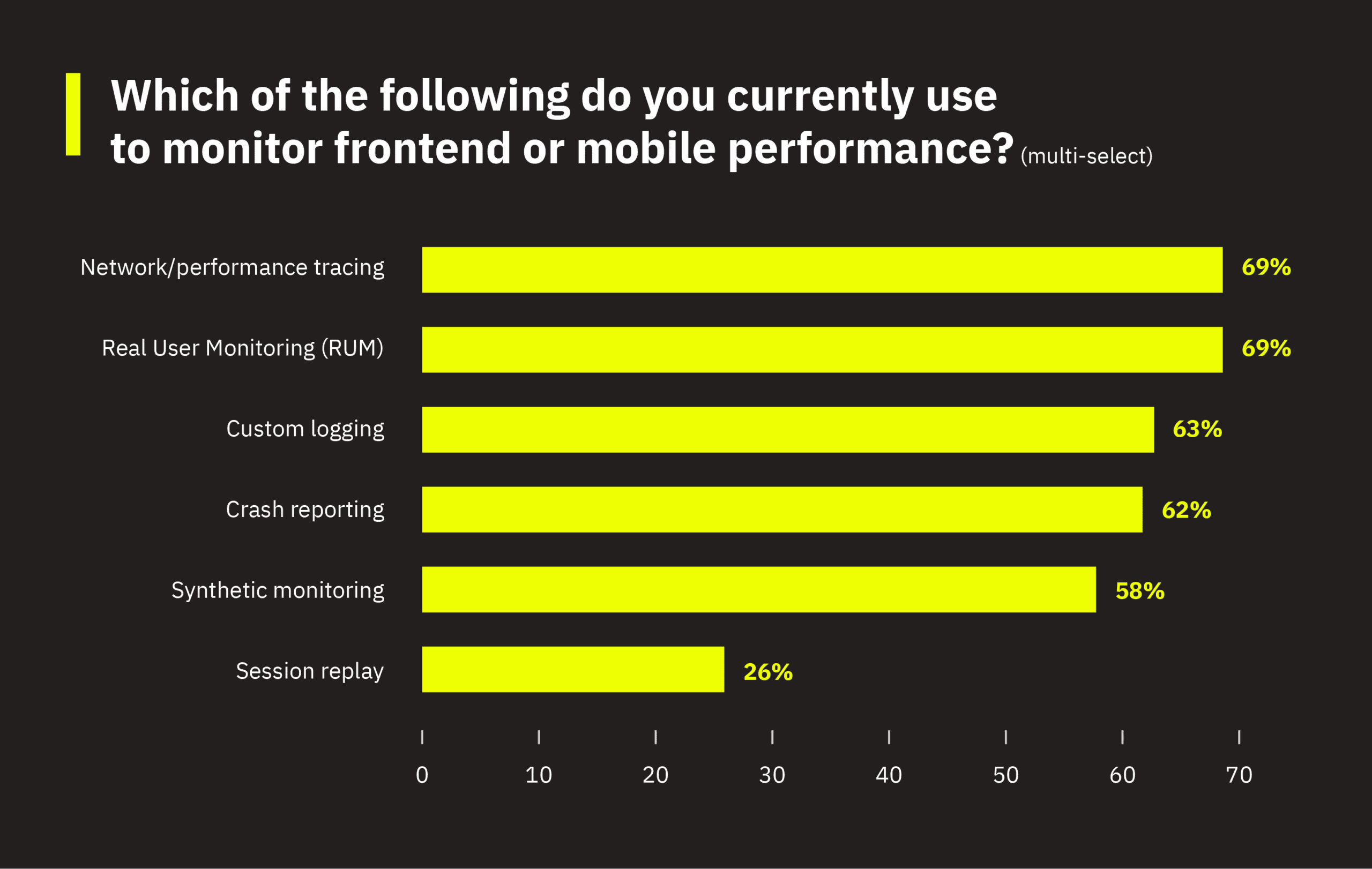

Network/performance tracing and Real User Monitoring (RUM) are the most widely adopted monitoring methods, each used by 69% of respondents. Custom logging (63%), crash reporting (62%), and synthetic monitoring (58%) form the next tier. Session replay remains relatively niche at 26%. If your team uses tracing and RUM but hasn’t adopted session replay, you’re in line with the majority.

2.4 Lack of time and resources is the greatest barrier to better observability

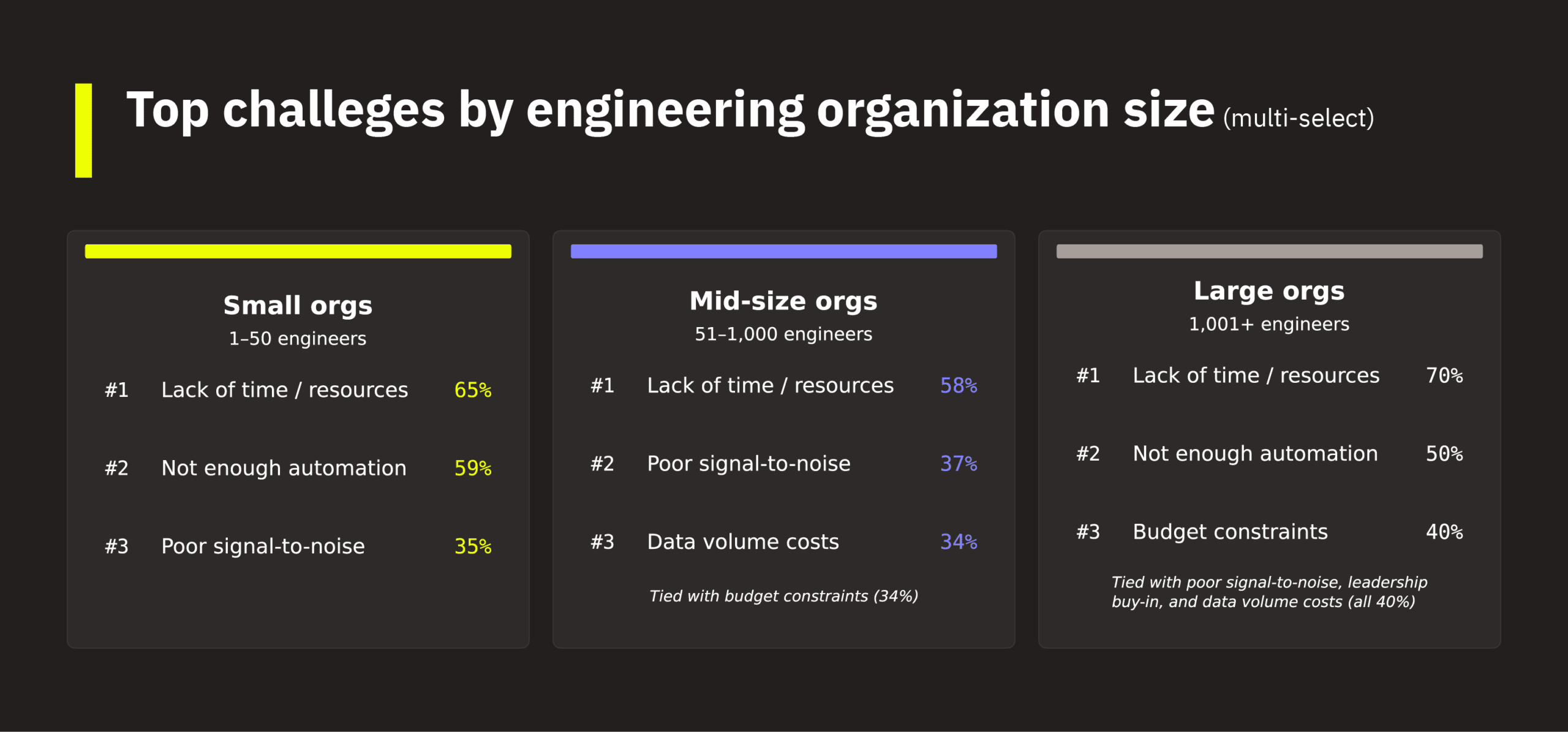

Almost two-thirds of respondents say that lack of time and resources is the biggest challenge in improving observability. This is followed by not enough automation (40%), poor signal-to-noise ratio (37%), budget constraints (31%), and data volume costs (29%).

The challenges shift depending on org size. Small teams (1–50 engineers) feel the automation gap most acutely (59% vs. 29% for mid-size) because they can’t compensate with headcount. Large orgs (1,001+) face a different set of problems: budget constraints (40%), data volume costs (40%), and leadership buy-in (40%) all spike. These are challenges that compound at scale, as enterprises wrestle with more tools, more data, and more organizational layers.

2.5 Observability maturity is a strong predictor of AI readiness

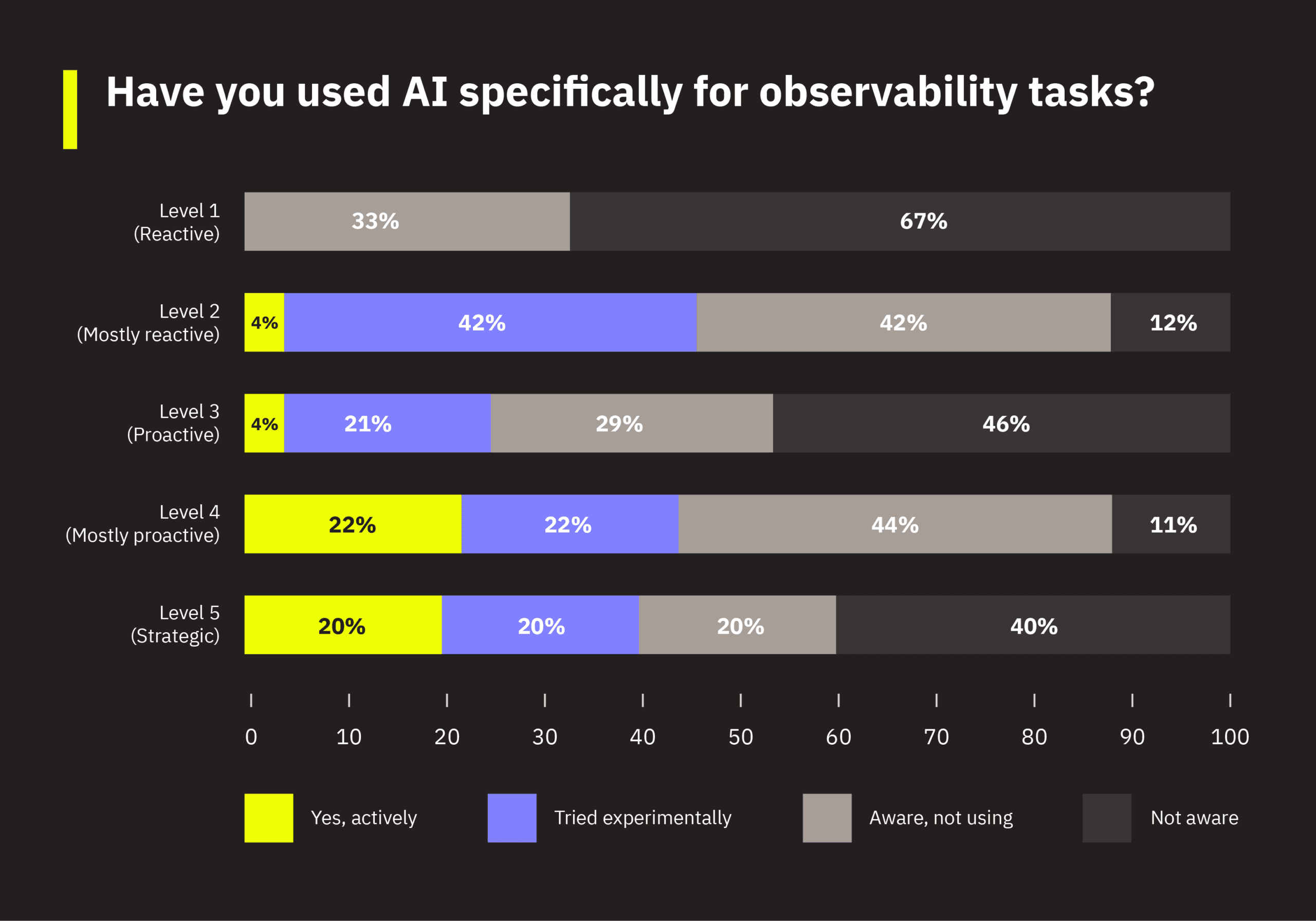

The survey also asked respondents about their awareness and use of AI specifically for observability tasks, ranging from “not aware” to “actively using.” We wanted to understand not just whether teams have heard of AI for observability, but whether there’s a pattern in who’s adopting it and who isn’t. (Section 4 covers AI adoption in detail.)

So, does your observability maturity predict how far along you are with AI? The data suggests a strong correlation here.

Level 1 (reactive) teams have the largest “not aware” segment at 67%, meaning two out of three haven’t heard of AI for observability. Level 3 is next at 46%, making them the largest absolute group of unaware respondents given their sample size. Level 2 teams are most likely to be in the “aware but not using” or “experimental” categories, which suggests these teams know AI for observability exists but are still figuring out how to start. At the other end, Levels 4 and 5 are the most likely to be actively using AI: 22% and 20% respectively, compared to just 4% at levels 2 and 3.

The takeaway is straightforward: AI adoption for observability tends to follow solid observability foundations, not precede them. If your team is at level 2 or 3 and hasn’t started with AI for observability, that tracks with where most teams at your maturity level are today.

Where does your team fit?

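Based on the patterns in this data, most teams fall into one of three common profiles: “stuck in the middle,” “advanced but constrained,” and “emerging mobile.” Section 6 describes each profile in detail.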

These are common patterns, not rigid categories. Many teams will see elements of more than one profile. The sections that follow explore how these patterns vary by platform and role.

3. The platform divide: Mobile vs. web

Do mobile and web teams face the same observability challenges? The answer is a resounding no.

This section compares the mobile-only respondents (29% of the dataset) and web-only respondents (46% of the dataset) directly. If you’re on a mobile team, you’re more likely to be earlier in your observability maturity and less likely to have encountered AI for observability. You also probably have a strong demand for AI tooling for root cause analysis. If you’re on a web team, you’re more likely to have broader instrumentation and AI experimentation, but you may face more organizational constraints around buy-in and staffing.

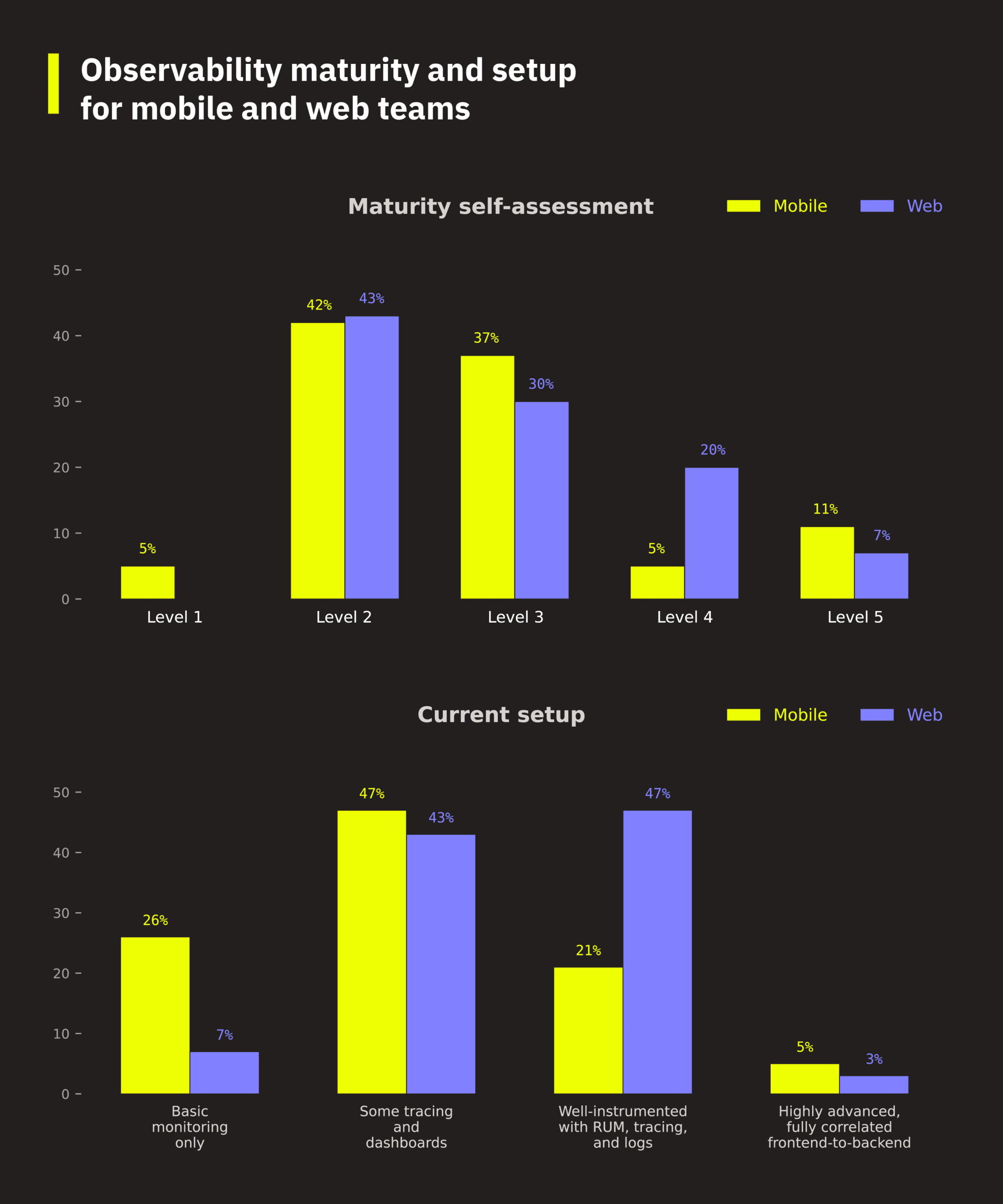

3.1 Web teams report much more advanced observability setups

Almost half of respondents from web teams describe their setup as “well-instrumented with RUM, tracing, and logs,” compared to just 21% of mobile teams. On the other end, 26% of mobile teams are still on basic monitoring only, versus just 7% of web teams. On the 1–5 maturity scale, web teams are four times more likely to rate themselves at level 4: 20% versus 5% for mobile.

3.2 Mobile and web teams build their observability around different foundations

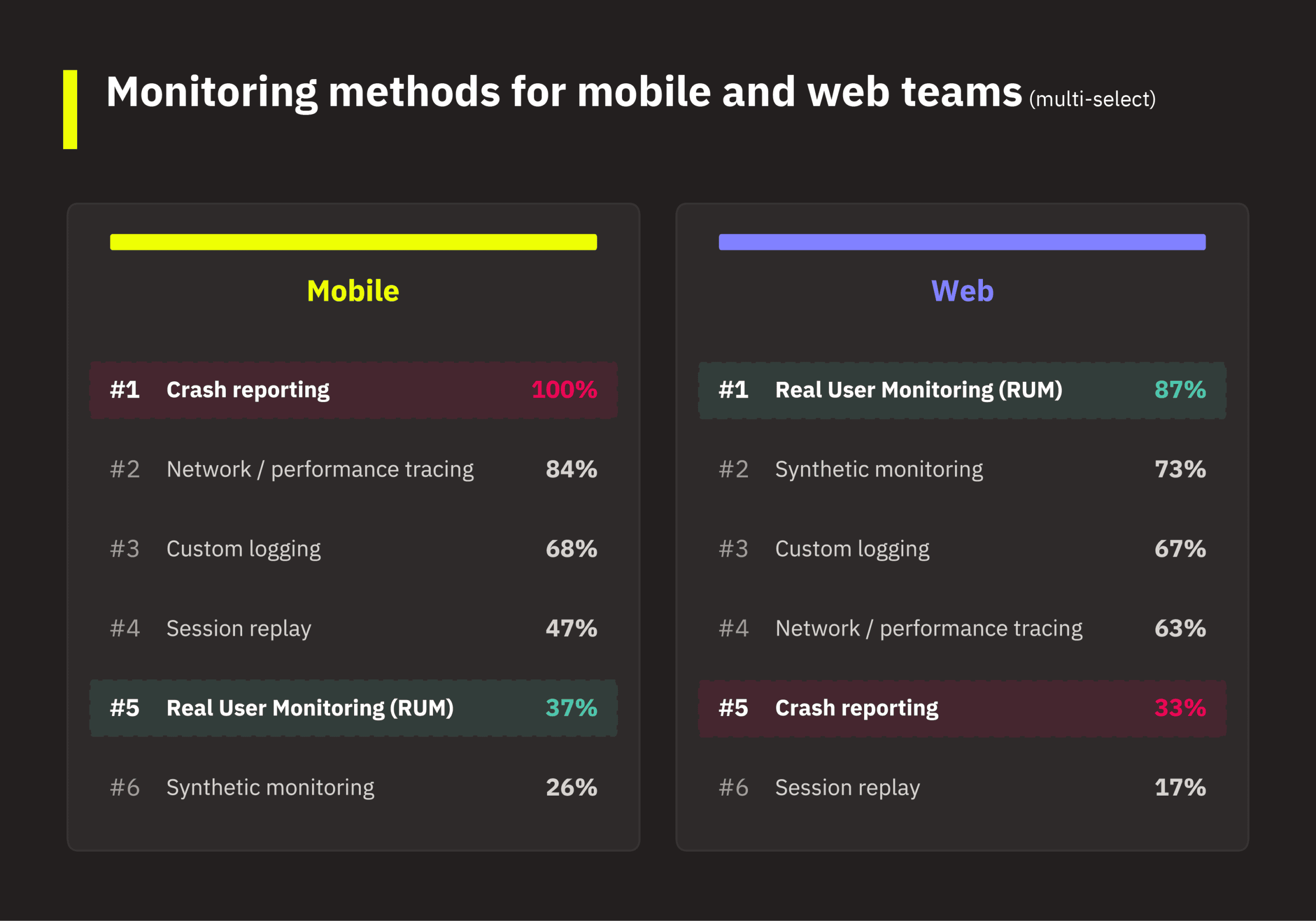

Mobile teams universally use crash reporting (100%) and lean heavily on network/performance tracing (84%) and session replay (47%). Web teams lead on real user monitoring (87%), synthetic monitoring (73%), and custom logging (67%).

The gaps also go both ways. RUM, a cornerstone of web observability, is used by only 37% of mobile teams. Crash reporting, a key component of mobile observability, is used by only 33% of web teams. Each platform has built its monitoring practice around the failure modes most common to its environment: crashes and network tracing for mobile, page performance and user flows for web.

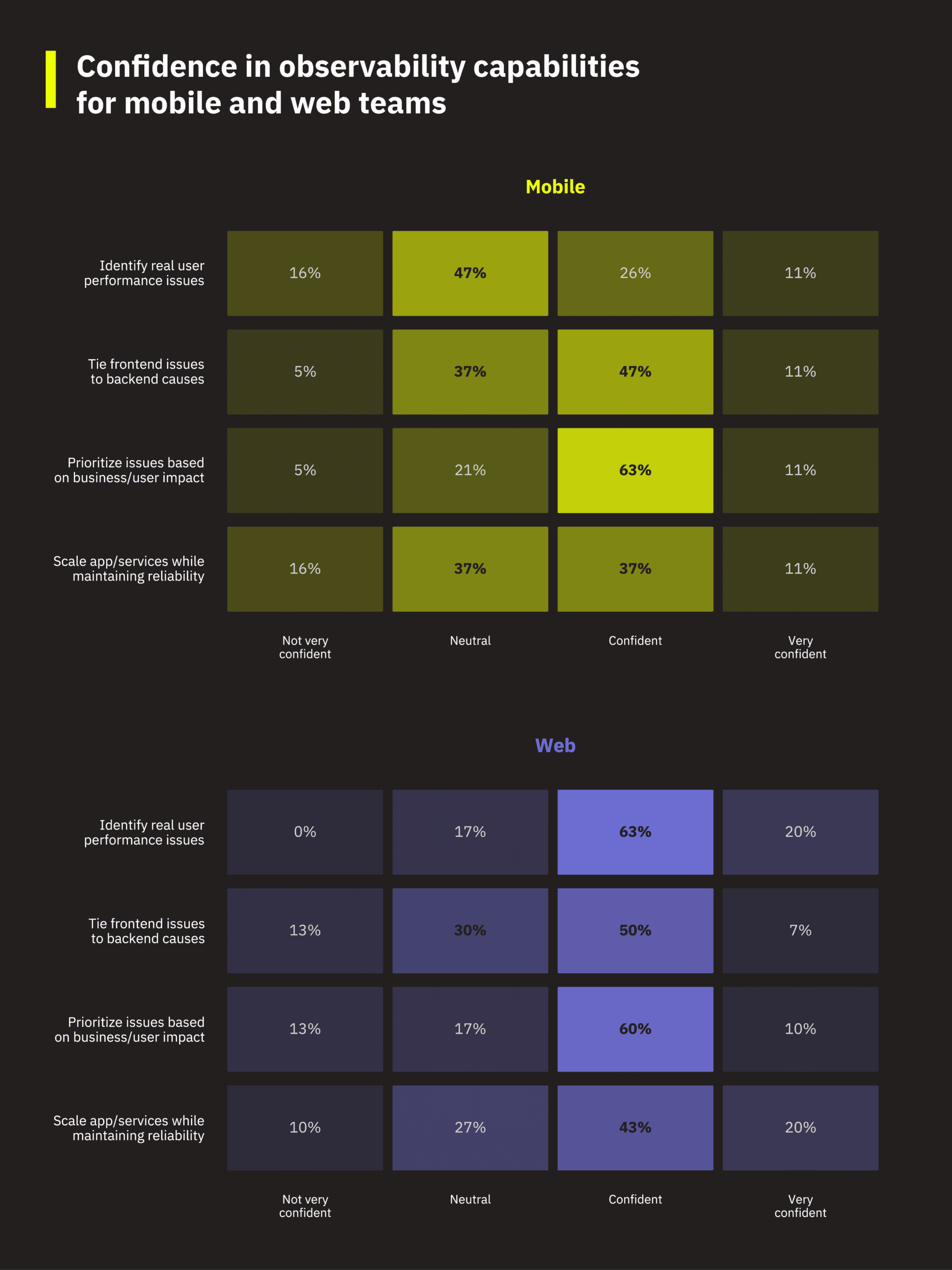

3.3 Mobile teams lag in confidence

Mobile and web teams differ most in their confidence in identifying real user performance issues. 83% of web respondents are confident or very confident here, compared to just 37% of mobile respondents. Nearly half of mobile engineers (47%) are neutral, suggesting they lack the tooling or data to understand what their users are actually experiencing. If you’re on a mobile team and feel less certain about real user performance than your web counterparts, you’re not alone.

On the remaining three capabilities (tying frontend to backend causes, prioritizing by business impact, and scaling reliably), the gap narrows, with mobile teams trailing by roughly 5–10 percentage points. The weakest capability shared by all teams is in tying frontend issues to backend causes, with mobile and web teams having similar profiles here (lower confidence and higher neutral rates). This is the explanation gap that was identified earlier in Section 2.2.

3.4 Web teams lag in leadership buy-in

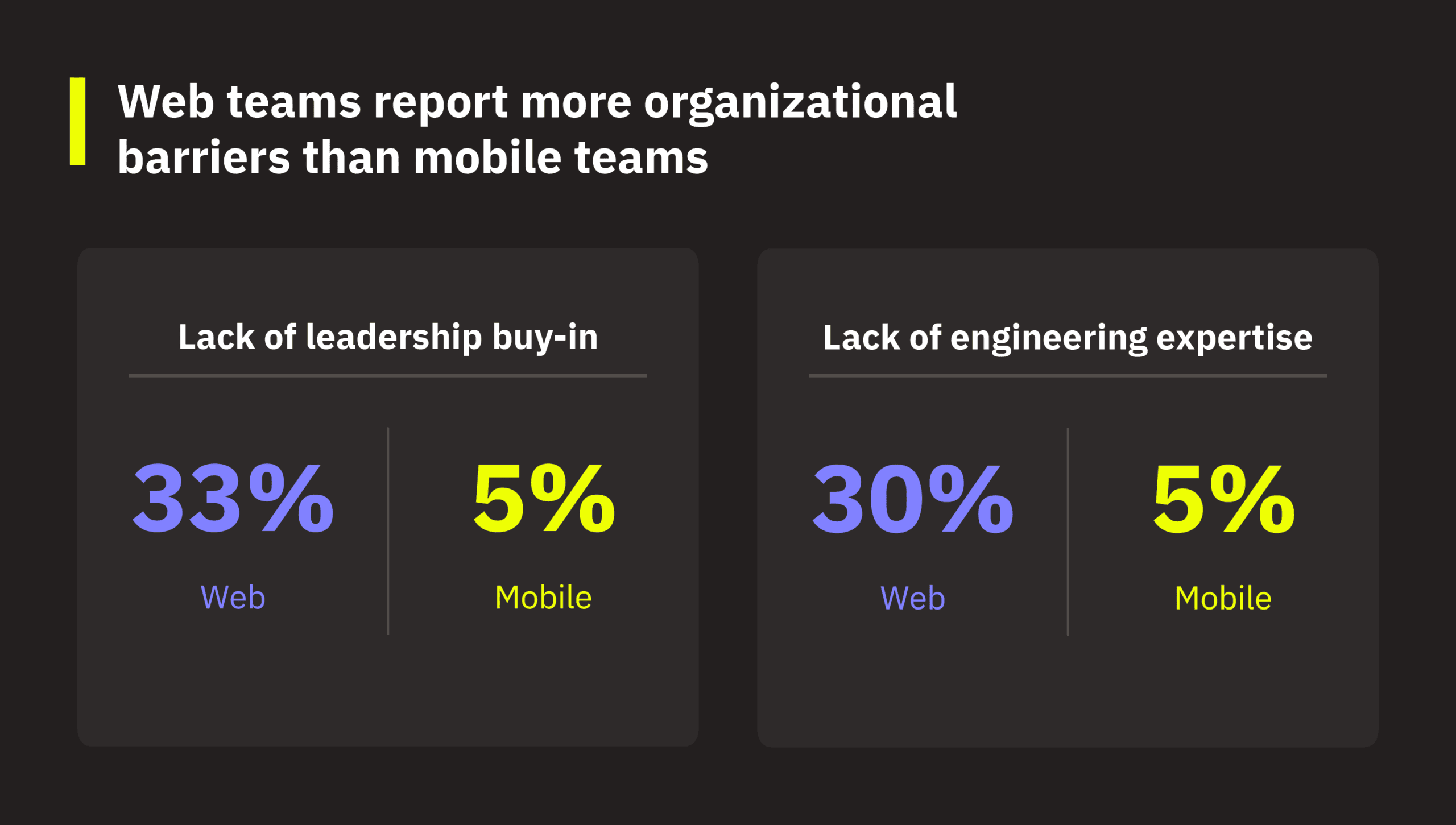

Mobile and web teams face qualitatively different barriers to achieving better observability. Both cite lack of time/resources as the top blocker (53% mobile, 63% web). Not enough automation ties for first among mobile teams (53%) and ranks second among web teams (40%). Where they diverge is on organizational barriers: 33% of web teams cite lack of leadership buy-in and 30% cite lack of expertise, compared to just 5% each for mobile. Mobile teams are more likely to cite tool complexity (37% vs. 30%).

This suggests mobile teams are fighting the tooling itself, while web teams are fighting to get their organizations aligned around observability as a priority. It’s a different kind of friction, and it likely requires different solutions for each platform.

3.5 Web vs. mobile: Significant gaps in AI readiness

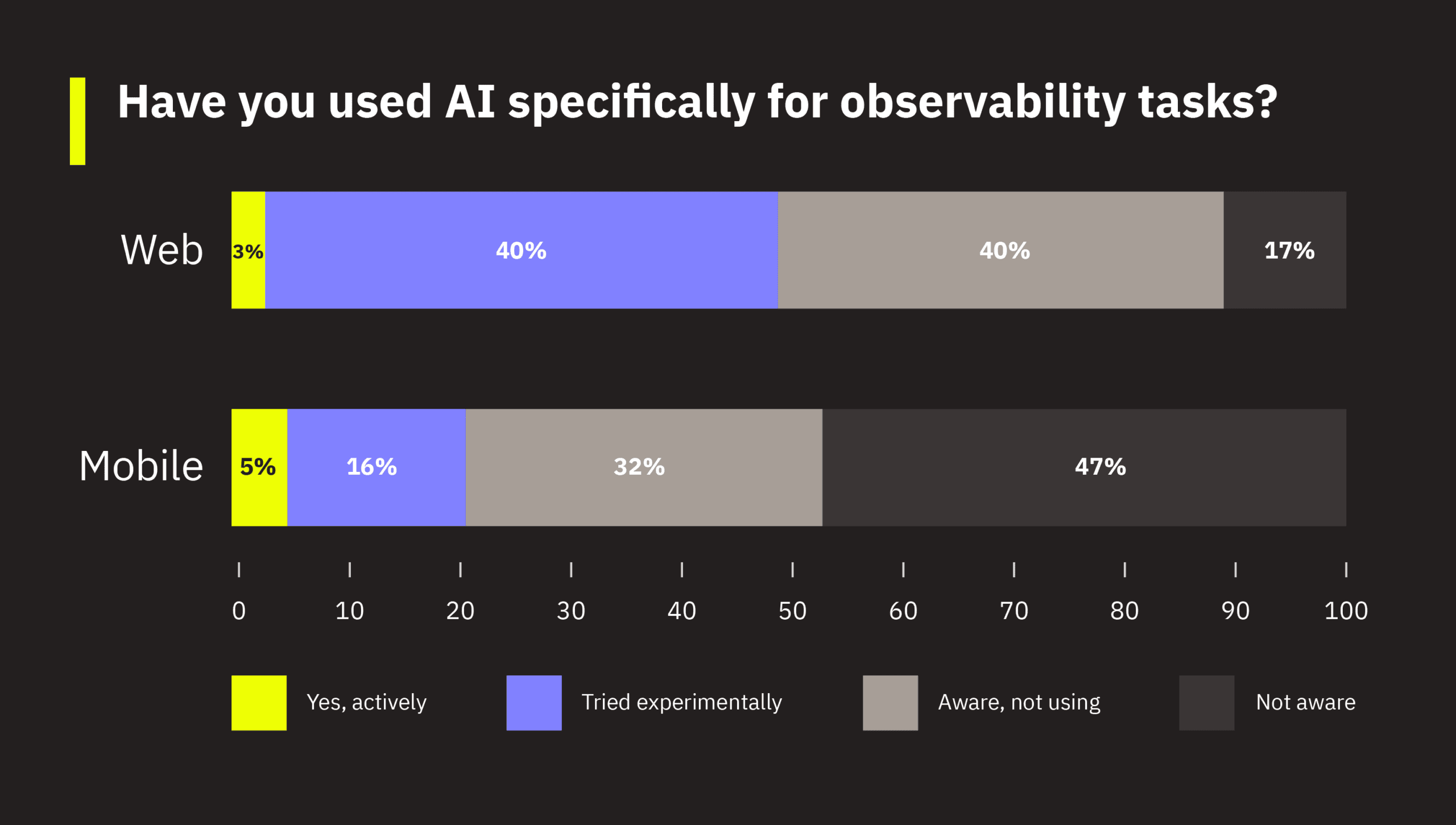

The biggest gap between mobile and web teams isn’t maturity or tooling; it’s AI awareness. 47% of mobile engineers aren’t even aware that AI can be applied to observability tasks, versus just 17% of web engineers. Web teams are also 2.5 times more likely to have experimented with AI (40% vs. 16%). If you’re on a mobile team and AI for observability isn’t on your radar yet, nearly half of your mobile peers are in the same position.
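The “times more likely” figures in this section are simple ratios of two percentages. The short Python sketch below shows the arithmetic. The helper name `times_more_likely` is ours for illustration, and the inputs are the rounded percentages quoted above, so the results are approximate.

```python
def times_more_likely(pct_a: float, pct_b: float) -> float:
    """Return how many times larger pct_a is than pct_b."""
    if pct_b <= 0:
        raise ValueError("the comparison percentage must be positive")
    return pct_a / pct_b

# Web vs. mobile: share that has experimented with AI for observability.
experimented = times_more_likely(40, 16)

# Mobile vs. web: share that is NOT aware AI can be applied to observability.
unaware = times_more_likely(47, 17)

# Web vs. mobile: share that IS aware (100% minus the unaware share).
aware = times_more_likely(100 - 17, 100 - 47)

print(f"experimented: {experimented:.2f}x")
print(f"unaware:      {unaware:.2f}x")
print(f"aware:        {aware:.2f}x")
```

Note that the ratio changes depending on which side of a split you compare. The gap in being unaware is much larger than the gap in being aware, even though both come from the same two numbers.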

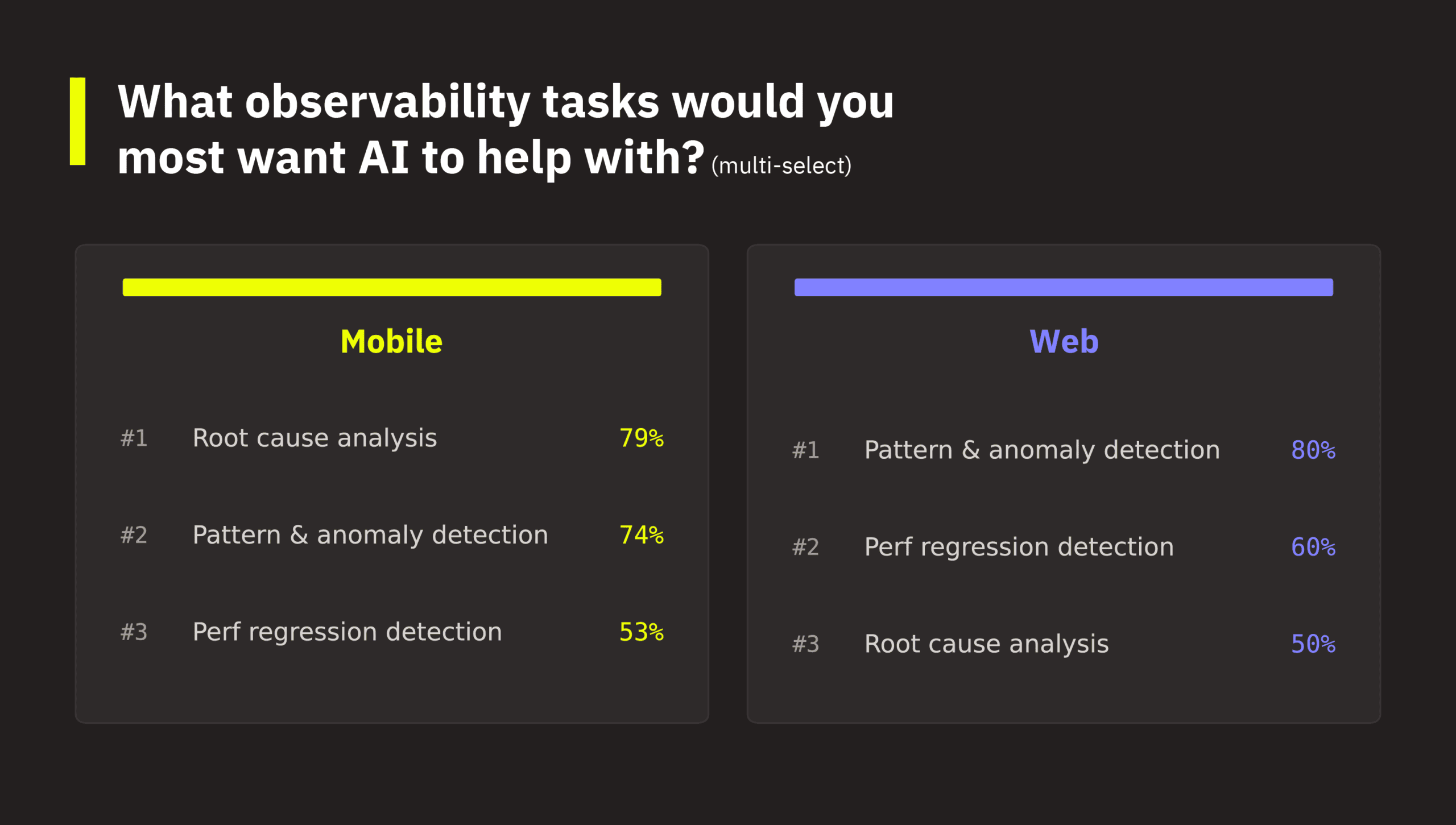

Mobile and web teams share the same top three observability tasks they’d most want AI to help with; however, they rank them differently. Root cause analysis is mobile’s top selection at 79%, significantly higher than web teams’ 50%. This makes sense, given that debugging user-impacting issues on mobile has significantly more complexity, spanning device fragmentation, OS versions, app versions, and network connectivity variations, to name a few. In contrast, web teams select pattern and anomaly detection as the most desired task for AI, followed by performance regression detection.

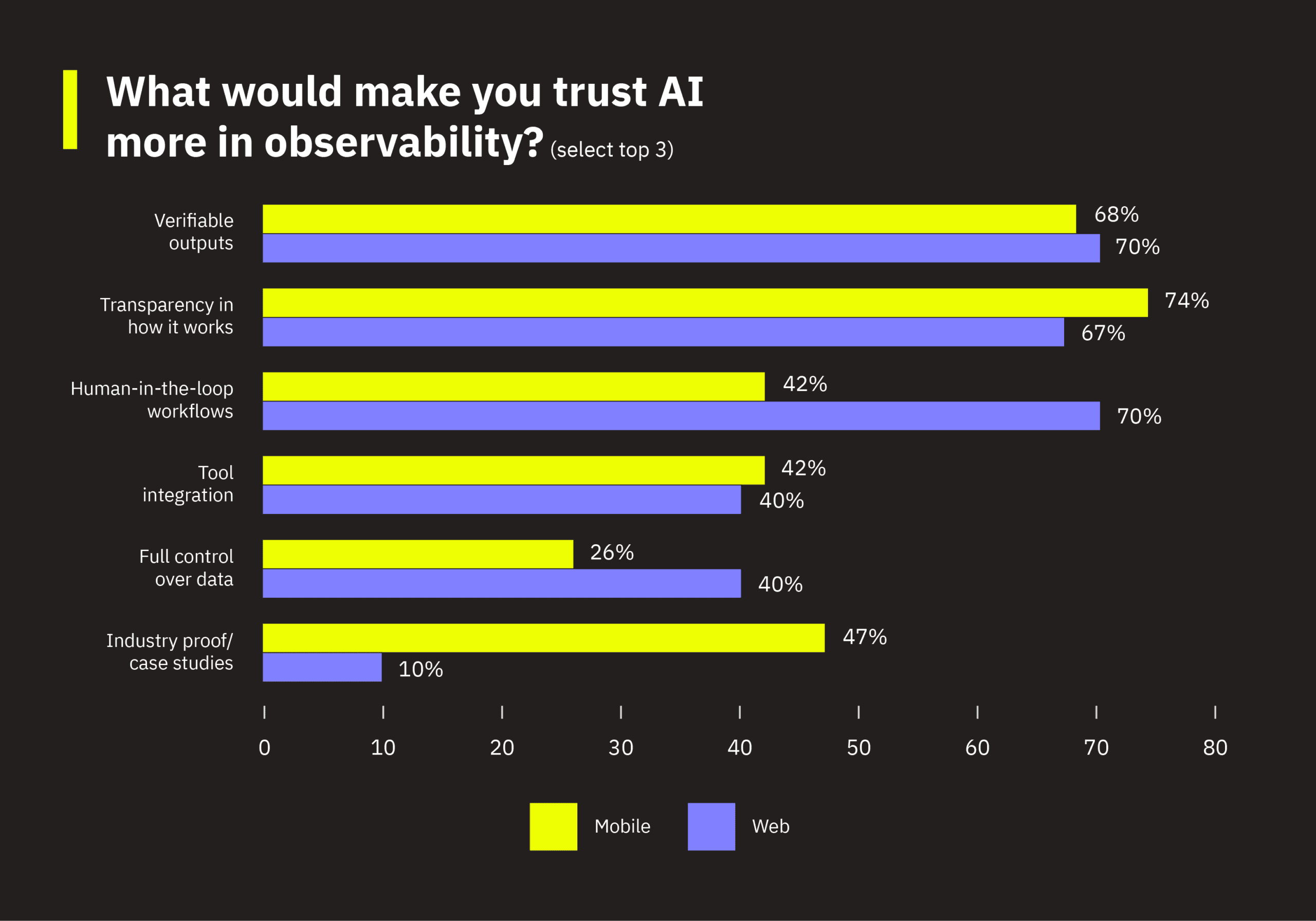

Trust factors differ accordingly. Both platforms value verifiable outputs and transparency roughly equally. But mobile teams rank industry proof and case studies far higher (47% vs. 10%), wanting to see others succeed before committing. Web teams prioritize human-in-the-loop workflows (70% vs. 42%) and full data control (40% vs. 26%), consistent with a group that’s further along in thinking about how to operationalize AI safely.

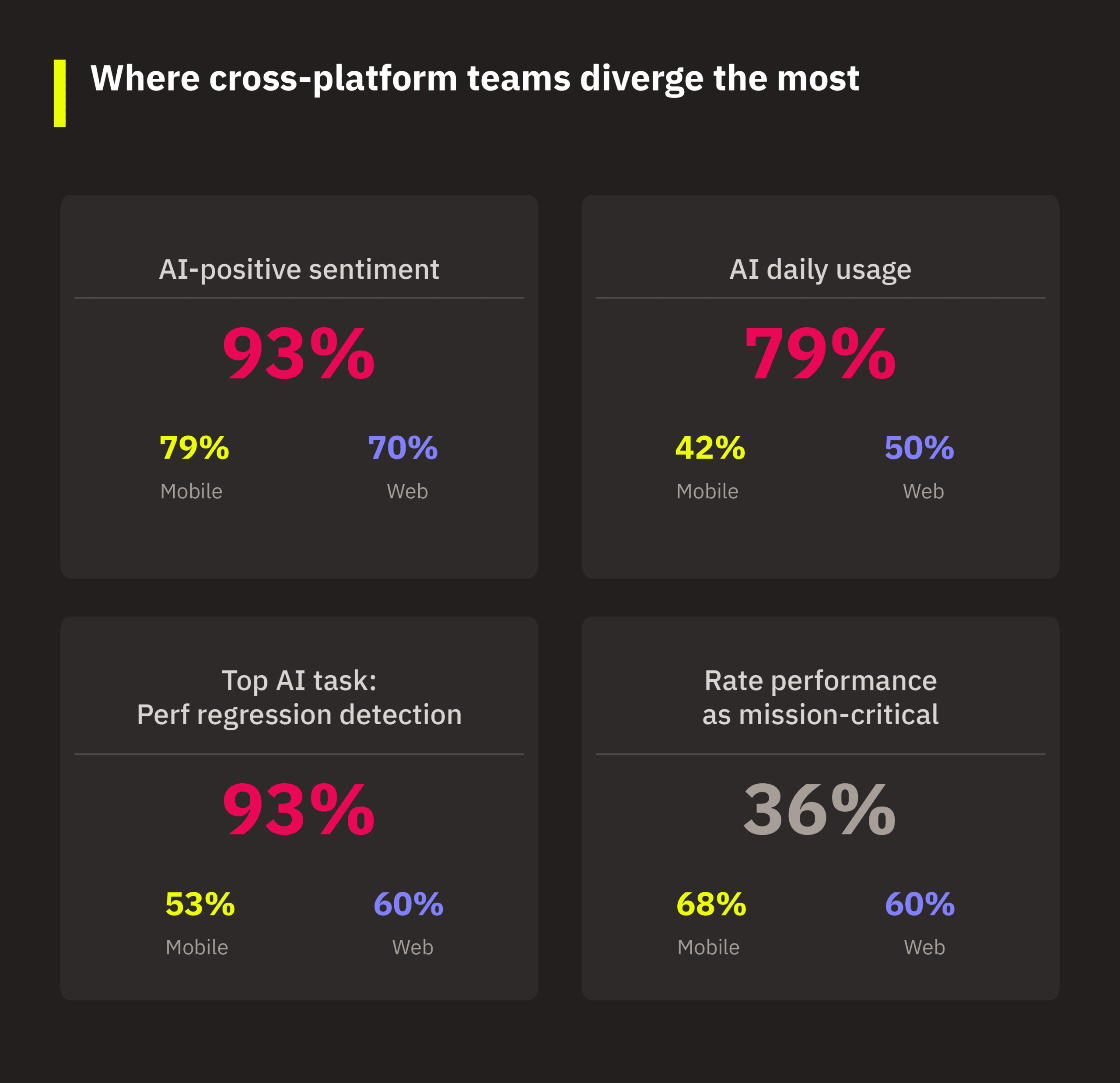

A note on cross-platform teams. The 22% of respondents who work across both web and mobile form a distinct cohort, heavily skewing toward leadership and cross-functional roles (e.g., engineering managers, directors, product managers).

Cross-platform respondents are the most AI-enthusiastic segment:

- 93% think positively about AI (vs. 79% mobile, 70% web).

- 79% use AI daily (vs. 42% mobile, 50% web).

- Their top AI observability priority is performance regression detection at 93%, which is far above either single-platform group. This priority is consistent with the challenge of shipping across platforms and catching regressions that manifest differently on each.

Interestingly, they rate frontend performance as mission-critical at a notably lower rate (36% vs. 68% mobile, 60% web). One explanation could be that frontend performance is only one of their many priorities (e.g., release stability, cross-platform consistency).

3.6 Huge perception gap between managers and individual contributors (ICs)

If you’re an engineering manager, your perspective likely reflects organizational constraints that span multiple teams (e.g., time, budget, resource allocation). If you’re an IC, your experience more likely reflects day-to-day tooling and debugging workflows.

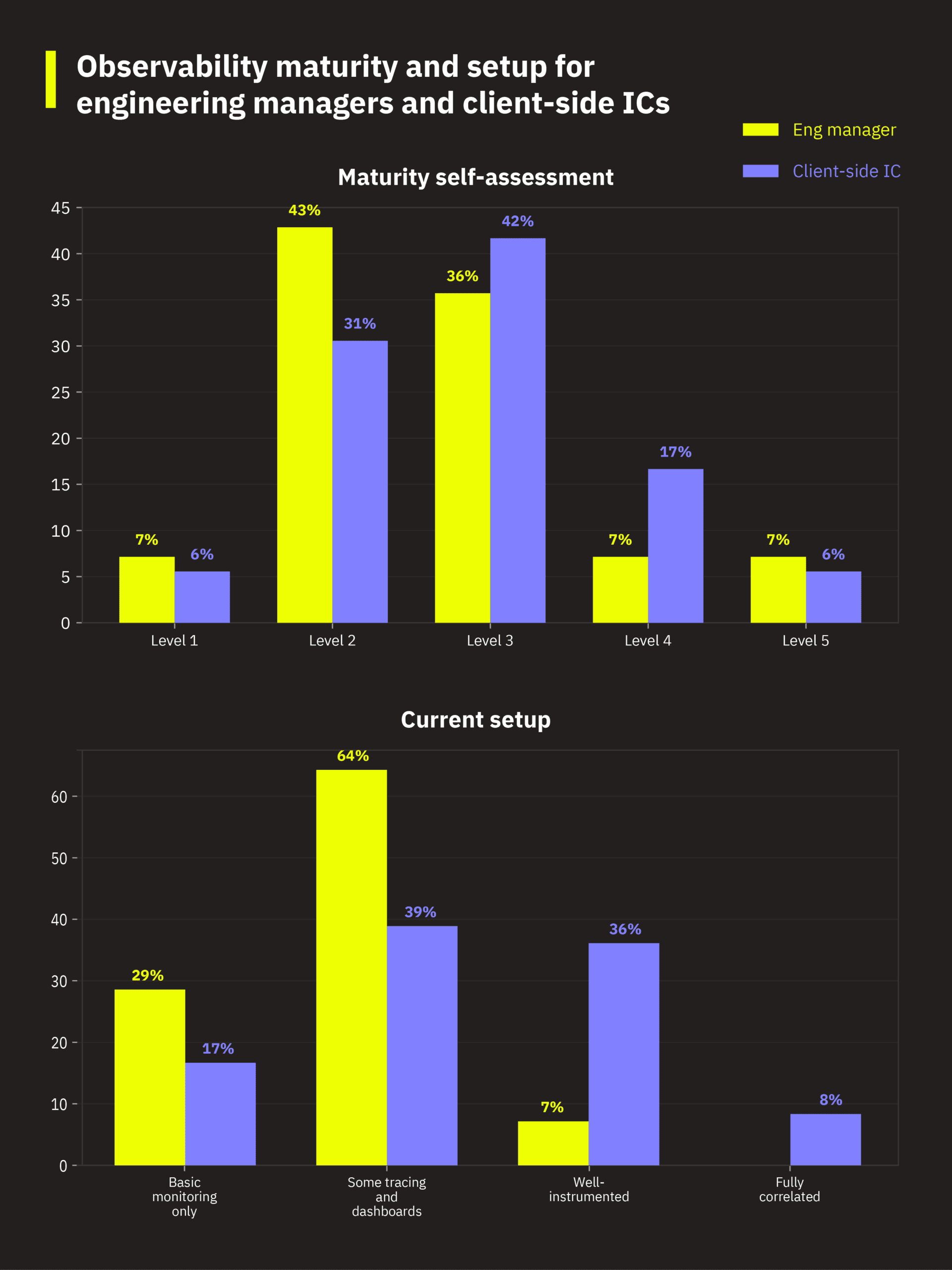

The survey reveals a meaningful perception gap between engineering managers/directors and client-side engineering ICs (comprising frontend web, mobile, and full-stack engineers). Managers rate their teams’ observability maturity lower than ICs do. 43% of managers place their team at level 2 (mostly reactive), compared to 31% of ICs. Managers are also more likely to describe their observability setup as “some tracing and dashboards” (64% vs. 39%), while ICs are five times more likely to report being well-instrumented (36% vs. 7%). Managers see the organizational gaps; however, ICs feel more confident about what’s in their immediate scope.

The confidence data tells the same story. On identifying real user performance issues, 36% of managers are neutral compared to 22% of ICs, while 22% of ICs feel very confident versus just 7% of managers. ICs who work directly in the code either know the systems well enough to be very sure, or they recognize where they have blind spots. Managers, seeing across the full surface area, cluster in a cautious middle.

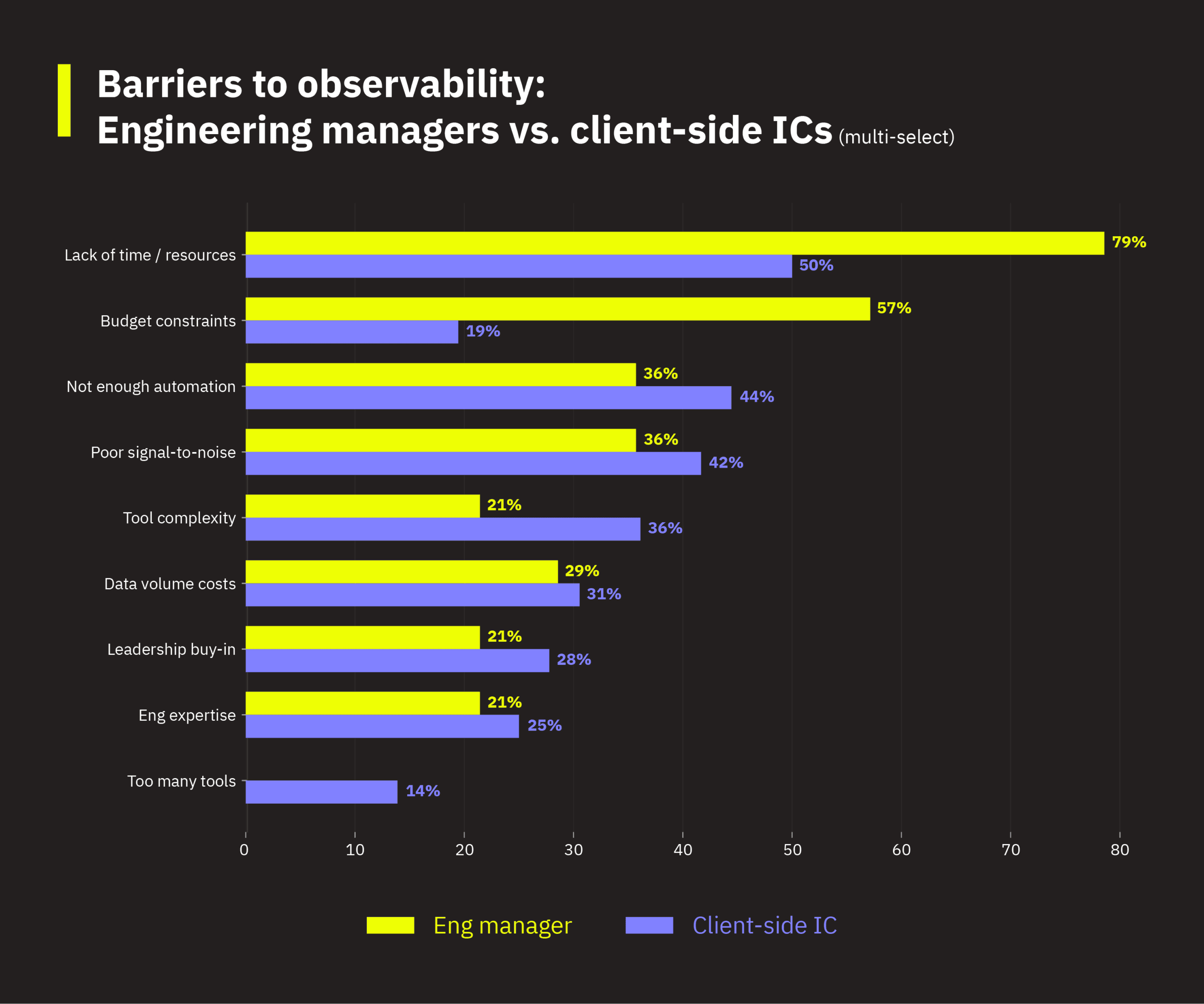

Constraints are split along organizational vs. tooling lines. Managers’ top barrier is lack of time and resources at 79% (vs. 50% for ICs), followed by budget constraints at 57% (vs. 19%). ICs lead on not enough automation (44% vs. 36%) and tool complexity (36% vs. 21%). Understanding which framing resonates within your own team can help bridge the gap between what managers prioritize and what ICs need.

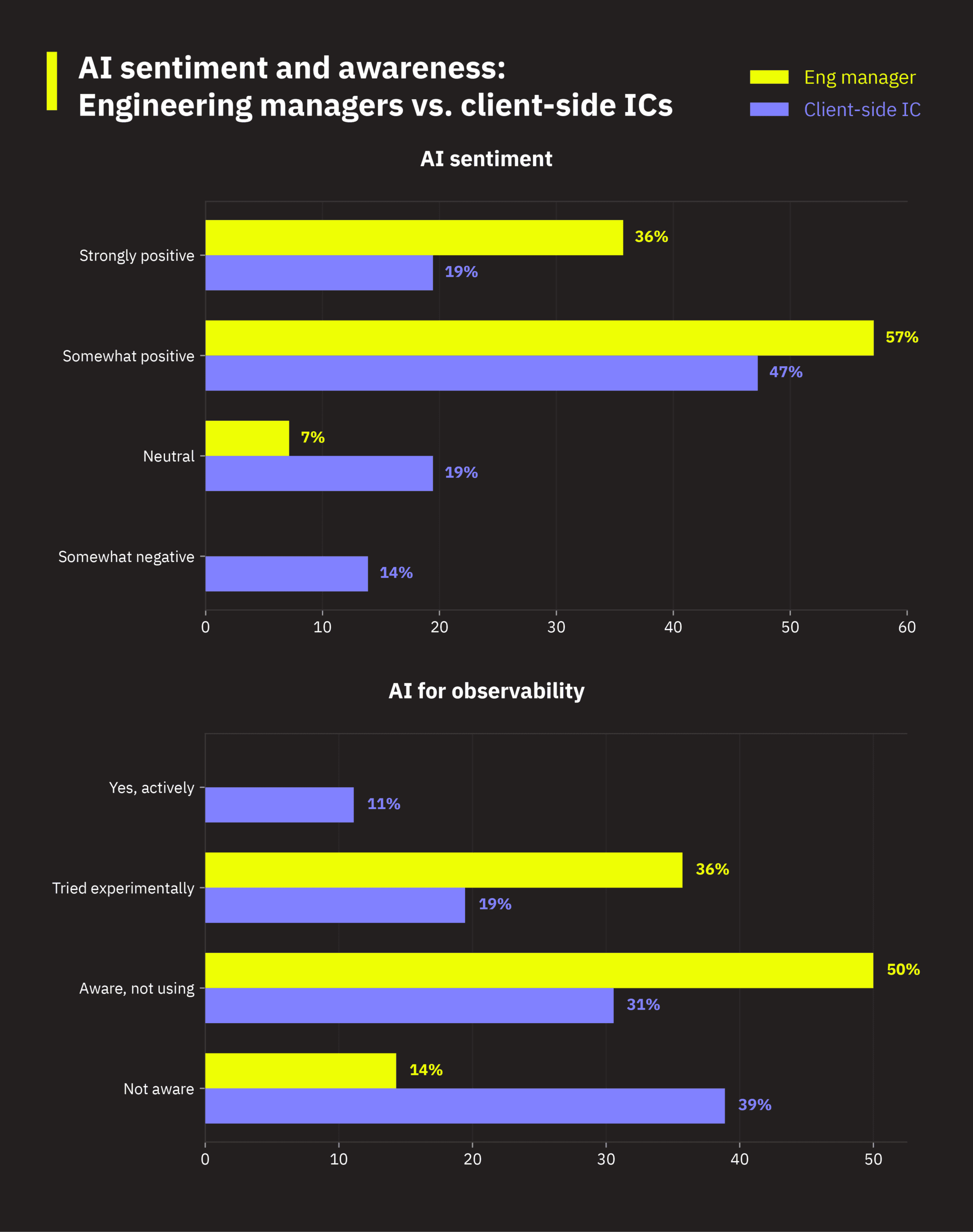

Managers are more uniformly positive about AI (93% positive, 0% negative) compared to ICs (67% positive, 14% negative). Managers use AI daily at a higher rate (64% vs. 50%) and are more likely to believe AI will “fundamentally change workflows” (43% vs. 17%). ICs take a more pragmatic view: 47% see AI as a core engineering tool, and they’re more likely to actually be using AI for observability tasks (11% actively vs. 0% of managers).

There’s also a sizable gap in AI awareness. 39% of ICs aren’t even aware AI can be applied to observability, compared to just 14% of managers. If your engineering leadership is enthusiastic about AI for observability but your ICs seem disengaged, the issue is probably awareness, not resistance.

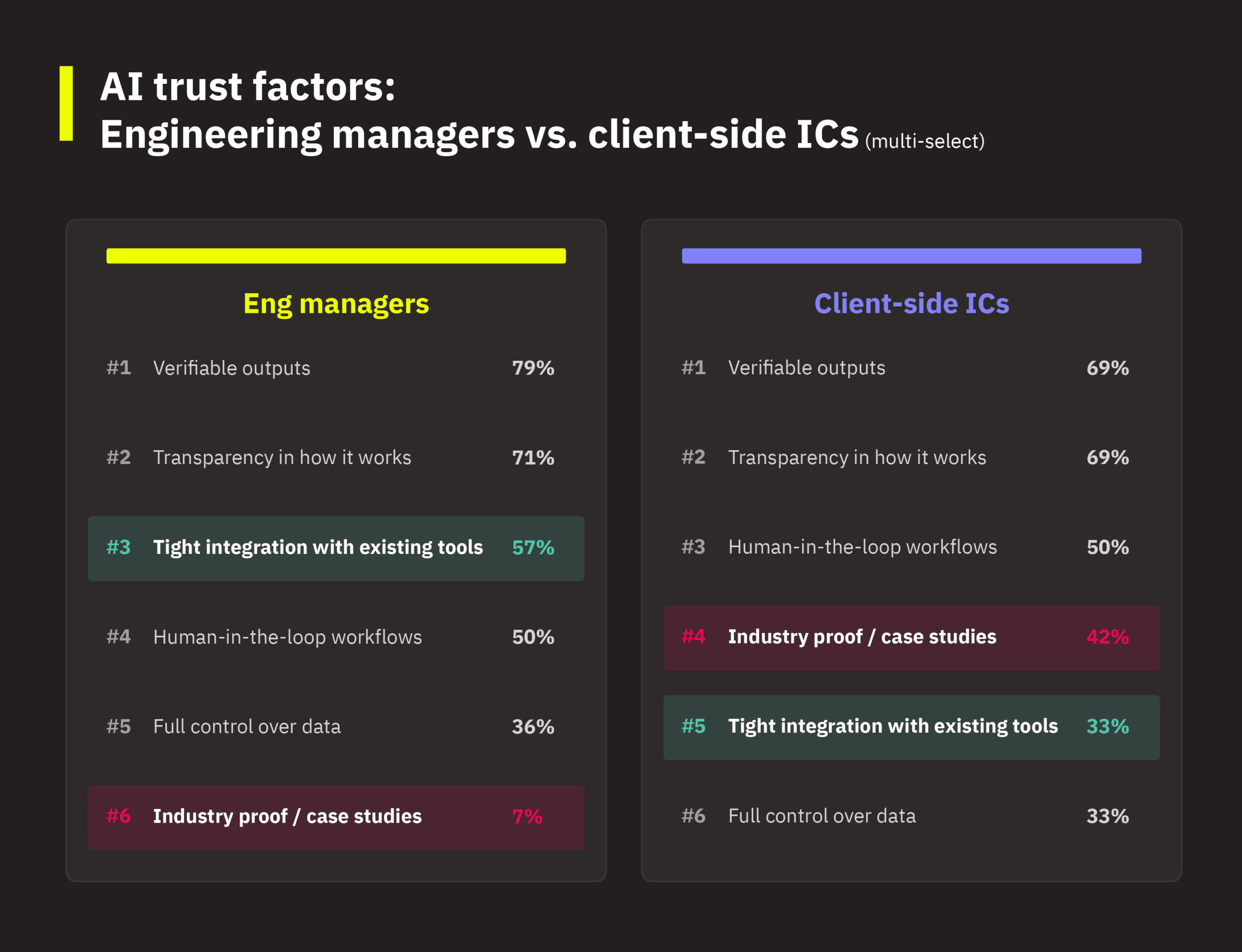

Trust priorities differ, too. ICs want industry proof and case studies at six times the rate of managers (42% vs. 7%). They want to see peer validation before adopting AI. Managers prioritize tight integration with existing tools (57% vs. 33%), reflecting their focus on operational efficiency.

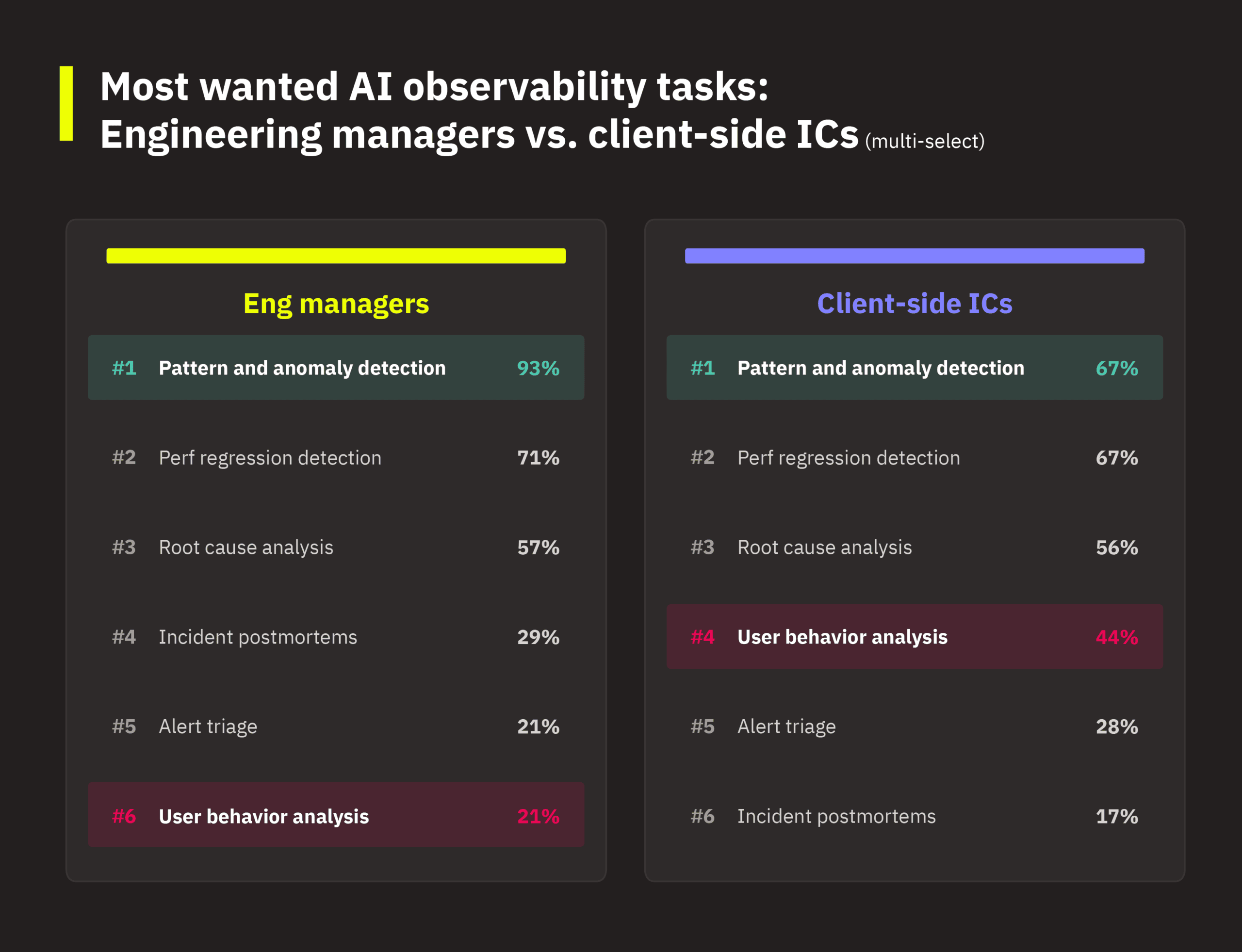

On desired AI tasks, managers heavily favor pattern and anomaly detection (93% vs. 67%), while ICs show notably more interest in user behavior analysis (44% vs. 21%).

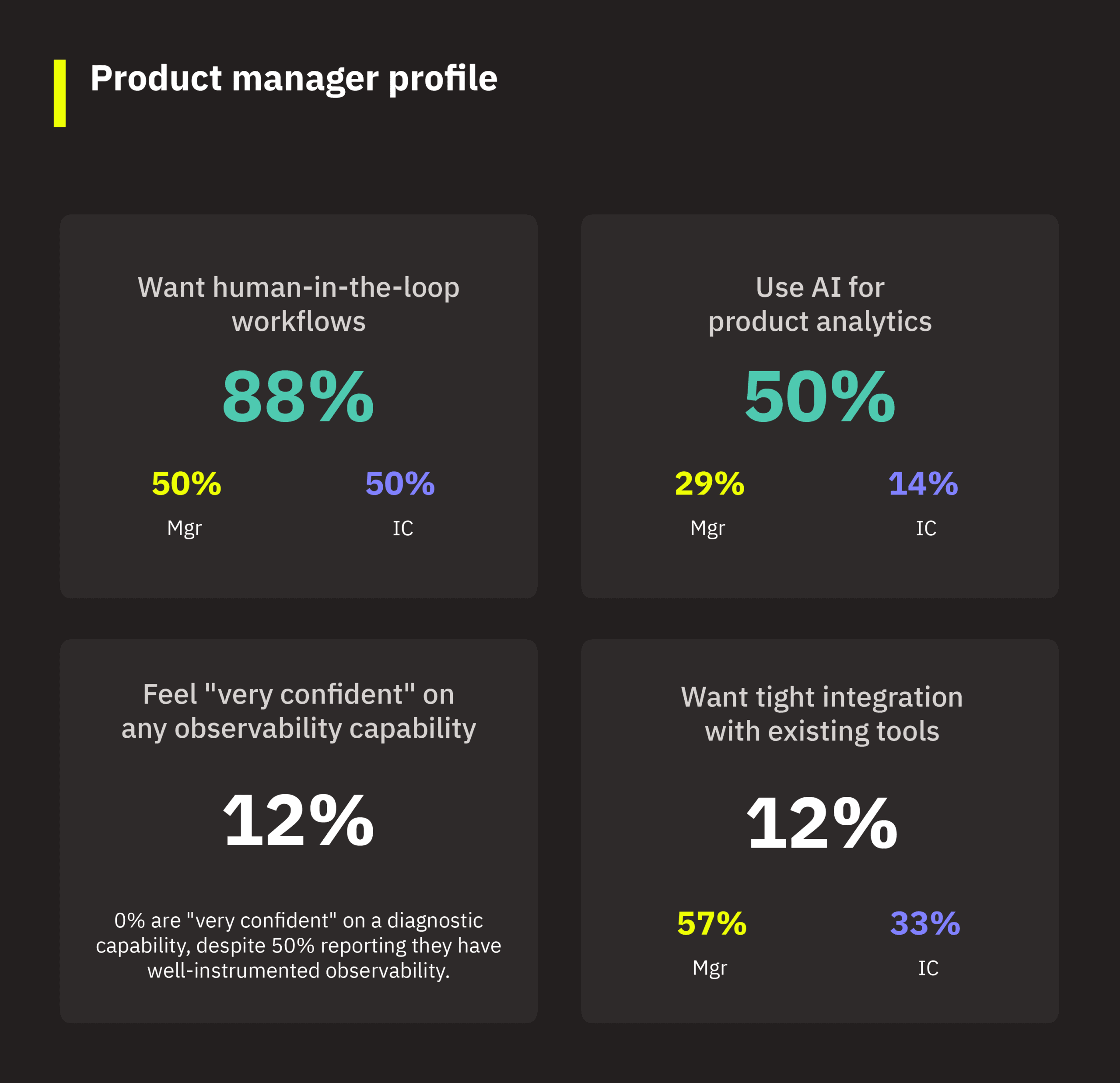

A note on product managers. PMs are 12% of survey respondents, and they offer a third perspective worth calling out. They’re excluded from the manager/IC comparison because they occupy a distinct role. They own product decisions informed by observability data but aren’t directly responsible for instrumentation or infrastructure.

PMs’ most striking differentiator is their emphasis on human-in-the-loop workflows as an AI trust factor: 88% rank it as essential, compared to 50% for both engineering managers and client-side ICs. That’s the single largest role-based gap on any trust factor in the dataset. PMs also use AI for product analytics at 50% (vs. 29% managers, 14% ICs). They’ve already found AI’s value for their own workflow but just haven’t connected it to observability. And despite reporting relatively advanced setups (50% well-instrumented), only 12% of PMs feel “very confident” on any of the four observability capabilities. That suggests observability outputs aren’t yet meeting PM decision-making needs.

4. AI in observability: Readiness, demand, and trust

4.1 The adoption gap

How widely are engineering teams actually using AI? The answer is, very widely… for development. 89% of respondents use AI tools in their workflow: 52% daily and 37% occasionally. Code generation leads at 78%, followed by debugging (63%), code review (57%), and test creation (55%). But only 8% actively use AI for observability. If your team uses AI for code generation or debugging but hasn’t explored it for observability, you’re in the same position as the vast majority of respondents.

This AI-observability gap is stark. 29% of respondents aren’t even aware they can use AI for observability. 28% have only experimented with it, and 35% are aware but haven’t started. Most engineers trust AI enough to generate and debug their code every day, yet they haven’t applied it to their observability practices. As one respondent at observability maturity level 4 put it, AI for observability needs to be productized and publicized with case studies to gain traction. The tooling simply isn’t visible yet to most teams.

4.2 What excites and concerns engineers

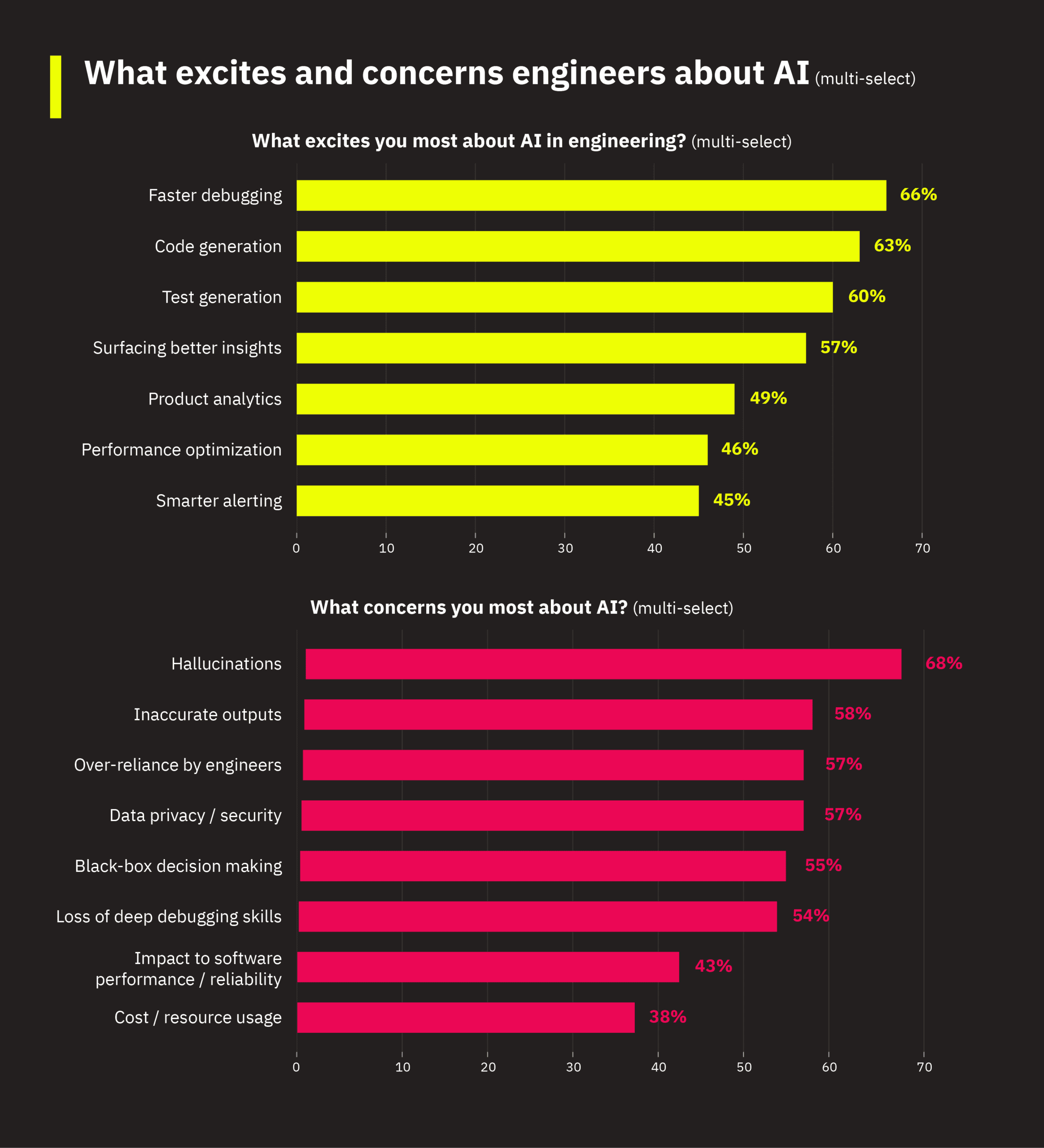

Engineers are most excited about using AI to improve developer productivity. Faster debugging (66%), code generation (63%), and test generation (60%) lead the list.

But there are significant concerns about AI. Hallucinations lead at 68%, followed by inaccurate outputs (58%), over-reliance by engineers (57%), and data privacy/security (57%). One respondent distilled the hallucination concern into two words: “confident incorrectness.”

That’s a useful way to describe the uncertainty in blindly accepting AI responses. When applications and systems are experiencing outages, engineers are under significant time pressure to resolve issues and restore service. AI-powered observability that provides confidently wrong answers may be worse than getting no answers at all.

It’s worth noting that 54% of respondents worry about AI adoption leading to a loss of debugging skills. This concern takes on extra weight for teams whose observability depends heavily on complex, manual investigations.

4.3 Earning trust: Different priorities for different maturity levels

What would it take for teams to trust AI in observability? Three factors dominate: verifiable outputs (69%), transparency in how it works (66%), and human-in-the-loop workflows (57%).

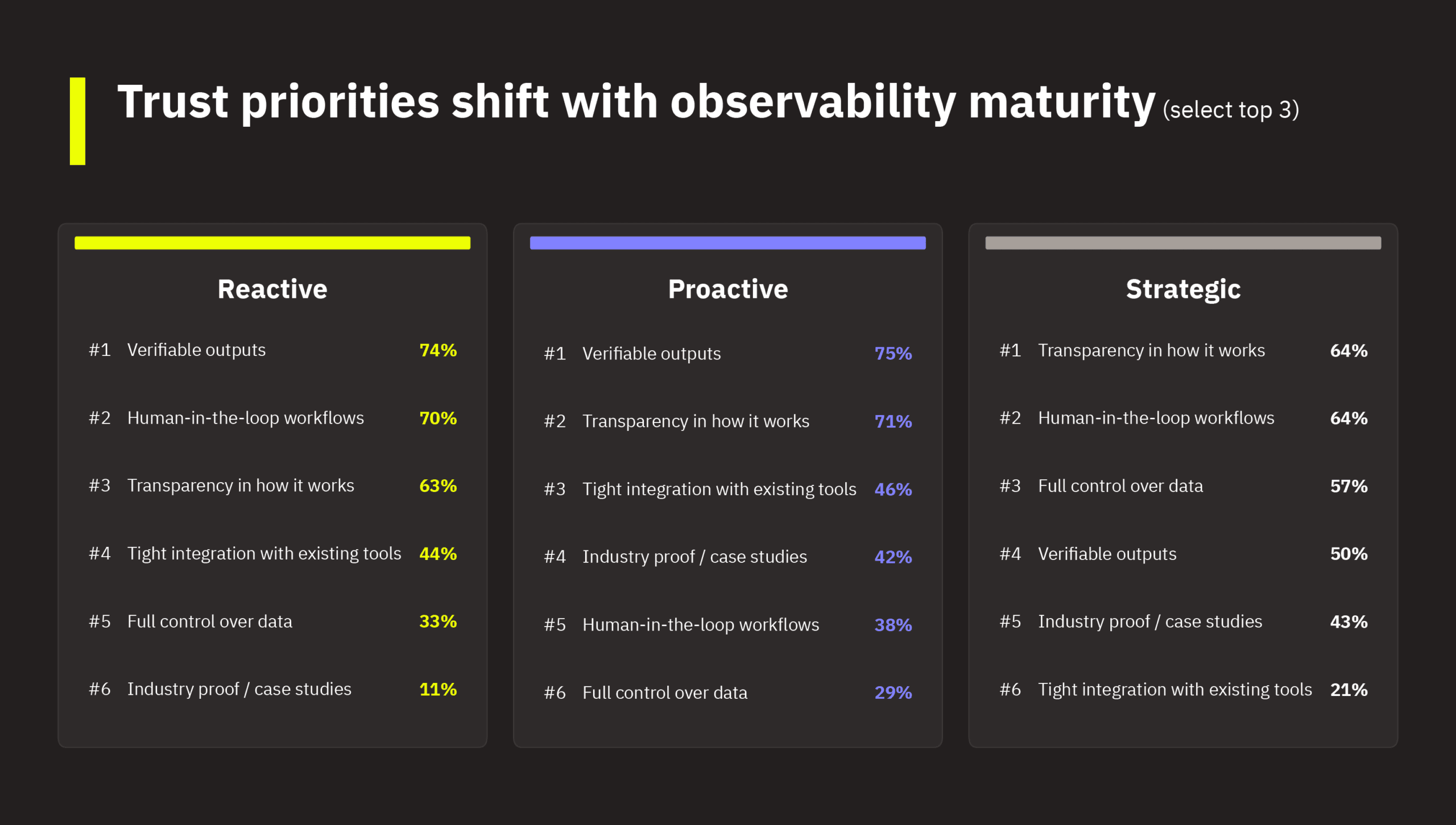

And trust priorities shift with maturity:

- Reactive teams (levels 1 or 2) prioritize verifiable outputs (74%) and human-in-the-loop (70%). They want guardrails because they lack the observability foundation to independently validate AI outputs.

- Proactive teams (levels 3 or 4) are the least likely to demand human-in-the-loop workflows (38% vs. 70% for reactive teams) but the most likely to want industry proof and case studies (42% vs. 11%). They’re past needing guardrails but not yet ready to commit. They want to see it work for peers first.

- Strategic teams (level 5) are the only group where data control ranks in the top three (57%), and they’re far less concerned about tool integration (21%) because their toolchains are already integrated. Their focus has shifted to governance.

Even positive respondents consistently frame AI as a supplement, not a replacement (e.g., “human oversight is critical,” “transparent, verifiable, and tightly integrated,” “keeping AI off the hot path”).

For teams not yet aware of AI for observability, industry proof and case studies are the top factor that would build confidence (47% vs. 13–30% for other groups). Peer examples carry more weight than feature lists when you’re still evaluating whether this category is real.

4.4 Demand for AI help exceeds the confidence gap in every capability area

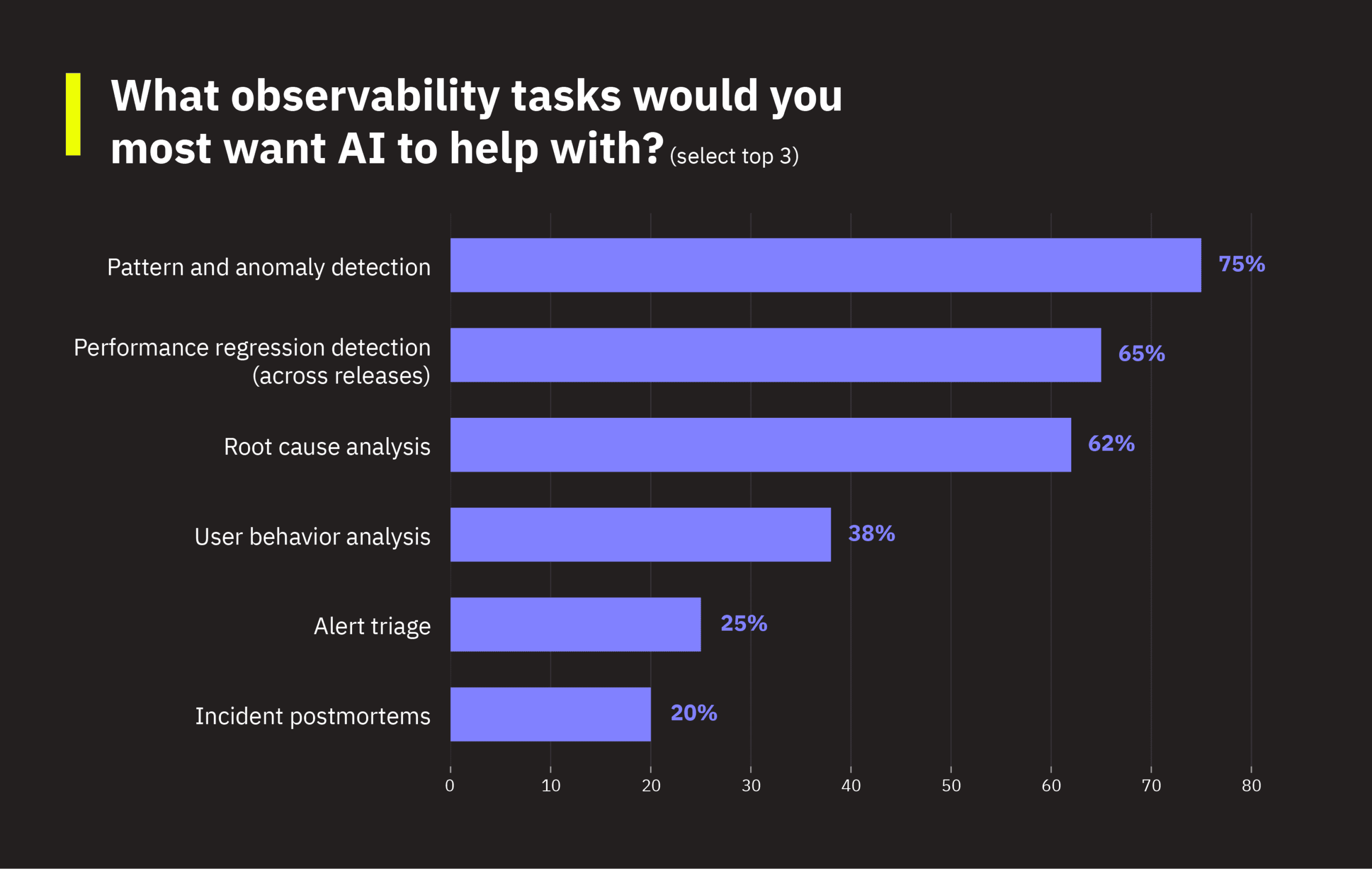

The top three tasks respondents want AI to help with are pattern and anomaly detection (75%), performance regression detection across releases (65%), and root cause analysis (62%). These map directly to the explanation gap, where teams can notice something is wrong, but they can’t determine what is causing it or why it’s happening.

Here’s the finding that surprised us most: Demand for AI help exceeds the confidence gap in every capability area. Pattern/anomaly detection shows roughly a 2x multiplier: 37% lack confidence in identifying real user issues, but 75% want AI to help. Even teams that feel confident in their current capabilities see AI as a way to do more and move faster. For these teams, AI is not a crutch; it’s a force multiplier. And 68% of respondents want it embedded in a mix of their development tools and observability platform. They want AI connected to their existing workflows, not a separate tool that requires context-switching to access.
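To make the multiplier concrete, here is a minimal sketch of the calculation, assuming it is the share of respondents who want AI help divided by the share who lack confidence in the matching capability. The report does not spell out the formula, so treat this definition and the function name `demand_multiplier` as our assumptions.

```python
def demand_multiplier(pct_wanting_ai: float, pct_lacking_confidence: float) -> float:
    """Ratio of AI demand to the confidence gap for one capability."""
    if pct_lacking_confidence <= 0:
        raise ValueError("the confidence gap must be positive")
    return pct_wanting_ai / pct_lacking_confidence

# Figures quoted above: 75% want AI help with pattern/anomaly detection,
# and 37% lack confidence in identifying real user issues.
multiplier = demand_multiplier(75, 37)
print(f"{multiplier:.1f}x")
```

Any value above 1 means more respondents want AI help than report a confidence gap, which is the pattern the survey found in all four capability areas.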

5. Key takeaways

Most frontend engineering teams are stuck in an observability middle. They can detect that something is wrong, but they struggle to explain why. The weakest capability across the board is tying frontend issues to backend causes, and the most-demanded AI use cases all target this explanation gap. Engineers already use AI daily for code generation and debugging, but most haven’t encountered it applied to observability yet.

There are key observability differences depending on your platform. Web teams are further along in both instrumentation and AI experimentation, while mobile teams face a harder diagnostic problem with less mature tooling. Managers see the resource constraints; ICs see the tooling gaps. And even confident teams want AI assistance. For them, it’s less about filling gaps and more about moving faster.

- Most teams (74%) are stuck in the observability middle. They have some instrumentation but lack full end-to-end visibility. The explanation gap (i.e., the ability to tie frontend issues to backend causes) is the most common pain point.

- AI for observability is not yet on most engineers’ radar. 29% aren’t aware it’s an option, and another 35% are aware but haven’t started. This contrasts with general AI adoption, with 89% of respondents actively using AI tools in their workflows. 72% believe AI will be important to observability within 2–3 years.

- Trust must be earned through transparency. Verifiable outputs, explainability, and human-in-the-loop are necessary for increased adoption of AI for observability. More advanced teams also demand data control and governance.

- Mobile and web teams face fundamentally different challenges. Mobile has more teams at basic monitoring (26% vs. 7%) and has a stronger demand for root cause analysis. Web teams have more advanced observability setups but face organizational buy-in challenges. Mobile teams are roughly 2.8 times more likely to be unaware of AI for observability (47% vs. 17%), and web teams are 2.5 times more likely to have experimented with it (40% vs. 16%).

- Managers and ICs view observability constraints differently. Managers see resource and budget constraints; ICs see tooling and automation gaps. If your leadership and ICs seem misaligned on observability priorities, they may be describing the same gap from different vantage points.

- Engineers want AI woven into their existing workflow. 68% prefer AI observability embedded across their dev environment and observability platform. AI task demand exceeds the confidence gap in all four observability capability areas. In other words, teams want AI to move faster, not just fill gaps.

Perhaps most surprising is that across all dimensions, most teams share similar patterns. They have partial observability maturity, limited end-to-end visibility, high AI adoption in development but low adoption in observability, and strong demand for better diagnostic capabilities. The differences are mostly platform (mobile vs. web) and role (manager vs. IC), not overall readiness. If you struggle with observability, your challenges are widely shared.

Now that you know where frontend engineering teams stand on observability and AI, let’s look at what you can do to close the gaps that matter most for your team.

6. Now what: Leveling up your observability maturity

Most teams fall into one of the three profiles identified earlier in this report. Wherever you are, the path forward isn’t about overhauling your stack or adopting every new tool. It’s about identifying the next constraint holding your team back and solving it well. The throughline across all three profiles is the same: Better observability means connecting what’s happening in your app to what it means for your users and your business.

It’s important to note that these profiles aren’t permanent. As your observability practice matures, you may outgrow one profile and start recognizing your team in another. The action items below are designed to help you get there.

6.1 If you’re a “stuck in the middle” team

You have some dashboards and tracing, but when something breaks, your team is still piecing together the story manually. Your biggest constraint is time and resources, and AI for observability probably isn’t on your radar yet. Roughly 74% of respondents fit this profile, so if this is you, you’re in good company.

- Close the explanation gap. Your weakest capability is connecting frontend issues to backend causes. Before adding new tools, focus on getting end-to-end visibility into your most critical user flows (e.g., login, checkout, app startup). If your team can detect a problem but can’t quickly determine what caused it and who’s affected, that’s the highest-leverage gap to close.

- Streamline your debugging workflows. If your team spends hours stitching together logs, traces, and dashboards to diagnose a single issue, that’s your biggest time sink. Identify the 2–3 most common investigation paths and standardize them. Reduce the number of steps between “something is wrong” and “here’s why.”

- Start connecting performance to user impact. Too many teams measure system metrics in isolation. Even at an early stage, you can start asking whether the metrics you’re tracking reflect what users actually experience. When an alert fires, can your team tell which users were affected and whether it matters? That habit of connecting performance signals to user outcomes is what separates teams that debug reactively from teams that prioritize proactively.

- Start exploring AI as a force multiplier. Your team already uses AI daily for code generation and debugging. The same instinct applies here. Pattern and anomaly detection is the lowest-friction entry point, and it doesn’t require rebuilding your observability foundation to start. Keep humans in the loop and focus on augmenting existing workflows, not replacing them.

Your goal isn’t a complete maturity jump out of the gate. Instead, focus on converting reactive workflows into proactive ones. You’ll know you’re making progress when your team can quickly explain why issues are happening, which users are impacted, and whether they’re a priority. If you’re still only detecting that something is wrong, you have room to grow.
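One common way to tie a frontend request to the backend work it triggers is to send a shared trace ID with every request, for example through the W3C Trace Context `traceparent` header. The survey doesn’t prescribe a mechanism, so the Python sketch below is only an illustration under that assumption. The function names and the checkout scenario are hypothetical, and it handles version `00` of the header only. In practice, most teams would rely on an instrumentation SDK instead of hand-rolling this.

```python
import re
import secrets
from typing import Optional

# Version 00 layout: version - trace-id (32 hex) - parent-id (16 hex) - flags (2 hex)
_TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")

def new_traceparent(sampled: bool = True) -> str:
    """Build a traceparent value for an outgoing client request."""
    trace_id = secrets.token_hex(16)  # 16 random bytes -> 32 hex characters
    span_id = secrets.token_hex(8)    # 8 random bytes -> 16 hex characters
    flags = "01" if sampled else "00"
    return f"00-{trace_id}-{span_id}-{flags}"

def parse_traceparent(value: str) -> Optional[dict]:
    """Return the trace context, or None if the header is malformed."""
    match = _TRACEPARENT.match(value.strip())
    if match is None:
        return None
    trace_id, parent_id, flags = match.groups()
    # All-zero IDs are invalid in the Trace Context format.
    if trace_id == "0" * 32 or parent_id == "0" * 16:
        return None
    return {
        "trace_id": trace_id,
        "parent_id": parent_id,
        "sampled": bool(int(flags, 16) & 1),
    }

# Client side (hypothetical checkout request): attach the header.
request_headers = {"traceparent": new_traceparent()}

# Backend side: recover the trace ID and record it on every log line and span.
context = parse_traceparent(request_headers["traceparent"])
print(context["trace_id"])
```

Once the same trace ID appears in both frontend and backend telemetry, “which backend call caused this slow checkout?” becomes a lookup instead of a manual reconstruction.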

6.2 If you’re an “advanced but constrained” team

Your instrumentation is strong and you’ve started experimenting with AI. But you’re blocked by organizational complexity: budget pressures, data volume costs, tool sprawl, and governance questions. You’re in the top 14% on maturity, and your next challenge is making observability work at scale across your organization. You’ve solved “can we see what’s happening?”, and now you’re stuck on “can everyone act on what we see?”

- Tie observability to business outcomes. At your maturity level, technical visibility alone isn’t enough. Connect performance metrics to the numbers leadership cares about: conversion rates, retention, and revenue impact. Build shared dashboards that engineering, product, and leadership can all read. When you can show that last week’s checkout latency spike cost $X in abandoned carts, the budget conversation changes overnight.

- Consolidate before you add. Tool sprawl is one of your defining constraints. Every overlapping tool means another login, another data silo, another tab your on-call engineer has to check at 2AM. Before evaluating new capabilities, audit whether your current stack can do more with better configuration or consolidation.

- Operationalize AI, not just experiment. Your team has probably tried AI for a few one-off investigations. The next step is embedding it into repeatable workflows: automated triage that surfaces likely causes before an engineer starts investigating, or regression detection that flags performance changes within minutes of a release. But scaling AI requires trust guardrails up front. Your peers in this profile prioritize data control (57%) over almost every other trust factor. Before you embed AI into daily workflows, get clear on which outputs need to be verifiable, where a human stays in the loop, and what data AI can access. That governance work is what builds the internal credibility to expand AI from a pilot to a standard part of your workflow.

- Align your managers and ICs on where to invest. The data shows managers at your maturity level cite budget and resource constraints while ICs cite tooling and automation gaps. They’re both right. They’re just describing the same bottleneck from different sides. An explicit conversation about shared priorities can prevent teams from working at cross-purposes.

Your goal is to make observability a system that informs business and engineering decisions, not just a debugging tool. You’ll know you’re making progress when performance conversations happen in terms of user and business impact, and when AI-assisted workflows are part of your team’s daily routine rather than occasional experiments.
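
As a rough illustration of putting a performance incident in business terms, the sketch below estimates revenue lost during a latency spike. Every input is a hypothetical placeholder, not survey data, and the calculation assumes the whole conversion drop is attributable to the spike, which real analysis would need to validate.

```python
def estimated_lost_revenue(sessions, baseline_conversion, spike_conversion, avg_order_value):
    """Estimate revenue lost while conversion was depressed.

    Assumes (hypothetically) that the full drop in conversion during the
    incident window was caused by the latency spike.
    """
    lost_orders = sessions * (baseline_conversion - spike_conversion)
    # Never report a negative loss if conversion happened to improve.
    return max(lost_orders, 0.0) * avg_order_value


# Hypothetical inputs: 10,000 checkout sessions during the spike,
# conversion falling from 5% to 4%, and a $60 average order value.
loss = estimated_lost_revenue(10_000, 0.05, 0.04, 60.0)
print(f"Estimated lost revenue: ${loss:,.0f}")
```

Even a coarse estimate like this gives engineering and leadership a shared number to discuss, as long as the assumptions behind it are stated alongside it.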

6.3 If you’re an “emerging mobile” team

Your crash reporting is solid, but deeper performance diagnostics are a challenge. You have lower observability maturity overall, limited awareness of AI for observability, and strong demand for root cause analysis. Mobile’s fragmented device and network landscape makes your diagnostic problem harder than what most web teams face.

- Expand beyond crash reporting into real user performance. Crash detection tells you something broke. Real User Monitoring tells you whether it mattered, for whom, and under what conditions. Only 37% of mobile teams use RUM, compared to 87% of web teams. That gap explains why nearly half of mobile engineers feel uncertain about what users are actually experiencing. Closing it is the single most impactful investment you can make.

- Cut through the fragmentation noise. Mobile’s biggest diagnostic challenge isn’t a lack of data. It’s too many variables. Thousands of device, OS, network, and app version combinations mean every issue looks unique. The teams that level up fastest are the ones that stop treating every crash as equally urgent and start grouping issues by user impact. A crash affecting 0.1% of users on an end-of-life device is a different priority than a slow startup affecting your highest-value user segment.

- Prioritize root cause analysis as your AI starting point. Mobile respondents ranked it their #1 desired AI task at 79%, the highest of any segment. Without it, your engineers are the root cause engine, manually reconstructing what happened for every issue. AI doesn’t replace that expertise. It gives your team a head start by narrowing the search space so engineers spend their time validating and fixing instead of jumping between tools.

- Seek out peer validation before committing. Mobile teams rank industry proof and case studies as a top trust factor (47% vs. 10% for web). Look for teams with similar mobile stacks who have adopted observability tooling or AI-assisted diagnostics. Their experience will tell you more than any feature comparison.
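
The idea of grouping issues by user impact rather than treating every crash as equally urgent can be sketched as a simple scoring function. This is a minimal example under assumptions: the `Issue` fields, the `segment_weight` values, and the scoring formula are hypothetical, and the sample numbers are illustrative rather than drawn from the survey.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    affected_share: float  # fraction of sessions affected, 0.0 to 1.0
    segment_weight: float  # hypothetical business weight of the affected segment


def impact_score(issue):
    """Score an issue by how many users it touches and how much they matter."""
    return issue.affected_share * issue.segment_weight


def rank_by_impact(issues):
    """Return issues ordered from highest to lowest estimated user impact."""
    return sorted(issues, key=impact_score, reverse=True)


issues = [
    # A crash affecting 0.1% of sessions on an end-of-life device.
    Issue("crash_eol_device", affected_share=0.001, segment_weight=1.0),
    # A slow startup affecting a high-value user segment.
    Issue("slow_startup_high_value", affected_share=0.08, segment_weight=3.0),
]

for issue in rank_by_impact(issues):
    print(issue.name, round(impact_score(issue), 4))
```

With these illustrative inputs, the slow startup outranks the crash even though a crash sounds more severe, which is the prioritization shift the recommendation above describes.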

Your goal is to shift from knowing when your app crashes to understanding why users are having poor experiences before those experiences lead to churn. You’ll know you’re making progress when your team can diagnose issues by user segment, device, and release, and when “it works on my device” stops being an acceptable answer.

6.4 Across all teams: Start with AI that augments, not replaces

Regardless of your profile, the survey data points to a clear starting path for AI in observability.

Start with the use cases that map to your biggest gaps. Pattern and anomaly detection (75%), performance regression detection (65%), and root cause analysis (62%) are the three most-demanded tasks across all respondents. They also map directly to the explanation gap that runs through this entire dataset. If your team can detect issues but struggles to explain them, these are the AI capabilities that will have the most immediate impact.

Build trust deliberately. Engineers aren’t opposed to AI. 77% view it positively. But they demand verifiable outputs (69%), transparency in how it works (66%), and human-in-the-loop workflows (57%) before they’ll rely on it for observability. Start with AI that augments your existing debugging and investigation workflows rather than replacing them. Keep humans on the critical path, especially early on.

Embed AI into the tools you already use. 68% of respondents want AI observability integrated across their development environment and observability platform. Avoid standalone tools that require context-switching. The goal is to make AI a natural extension of how your team already works, reducing the time from “something is wrong” to “we know exactly why, who’s affected, and what to do about it.”

7 Closing thought

The explanation gap is real, it’s shared, and it’s solvable. Wherever your team is today, the next step is the same: Connect what’s happening in your app to what it means for your users. Everything else follows from that.